I can be Apple, and so can you

A Public Disclosure of Issues Around Third Party Code Signing Checks

Summary:

- A bypass found in third party developers’ interpretation of the code signing APIs allowed unsigned malicious code to appear to be signed by Apple.

- Known affected vendors and open source projects have been notified and patches are available.

- However, additional third party security, forensics, and incident response tools that use the official code signing APIs may also be affected.

- Developers are responsible for using the code signing APIs properly; POCs are released to help developers test their own code.

- The bypass abuses the Fat/Universal file format and the lack of verification of nested formats.

- Only macOS and older versions of OS X are affected.

Affected Vendors:

- VirusTotal – CVE-2018-10408

- Google – Santa, molcodesignchecker – CVE-2018-10405

- Facebook – osquery – CVE-2018-6336

- Objective Development – LittleSnitch – CVE-2018-10470

- F-Secure – xFence (formerly LittleFlocker) – CVE-2018-10403

- Objective-See – WhatsYourSign, ProcInfo, KnockKnock, LuLu, TaskExplorer (and others) – CVE-2018-10404

- Yelp - OSXCollector – CVE-2018-10406

- Carbon Black – Cb Response – CVE-2018-10407

The Importance of Code Signing and How it Works on *OS

Code signing is a security construct that uses public key infrastructure to digitally sign compiled code, or even scripts, to ensure a trusted origin and to ensure that the deployed code has not been modified. On Windows you can cryptographically sign just about everything from .NET binaries to PowerShell scripts. On macOS/iOS, code signing focuses on Mach-O binaries and application bundles to ensure only trusted code is executed in memory.

Security, incident response, and forensics processes and personnel use code signing to separate trusted code from untrusted code, which lets detection and response personnel speed up investigations. Many types of tools and products use code signing to implement actionable security, including whitelisting, antivirus, incident response, and threat hunting products. Undermining the code signing implementation of a major OS would break a core security construct that many depend on for day-to-day security operations.

Code signing is not without its problems (1, 2, 3, 4, 5). Unlike some of the prior work, this vulnerability does not require admin access, JIT’ing code, or memory corruption to bypass code signing checks. All that is required is a properly formatted Fat/Universal file, and code signing checks return valid.

Vulnerability Details

This vulnerability lies in the difference between how the Mach-O loader loads signed code and how improperly used code signing APIs check signed code. It is exploited via a malformed Fat/Universal binary.

What is a Fat/Universal file?

A Fat/Universal file is a binary format that contains several Mach-O files (executables, dylibs, or bundles), each targeting a specific native CPU architecture (example: i386, x86_64, or PPC).
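To make the format concrete, here is a minimal Python sketch that reads a fat header. It assumes the classic 32-bit layout from `<mach-o/fat.h>`: a big-endian `0xcafebabe` magic and an entry count, followed by one 20-byte `fat_arch` record (cputype, cpusubtype, offset, size, align) per slice. The constants are copied by hand so the sketch runs off macOS, the function names are hypothetical, and the 64-bit `FAT_MAGIC_64` variant is not handled.

```python
import struct
from typing import List, NamedTuple

# Values from <mach-o/fat.h> and <mach/machine.h>, redefined so this runs anywhere.
FAT_MAGIC = 0xCAFEBABE            # 32-bit fat header magic, stored big-endian
CPU_ARCH_ABI64 = 0x01000000
CPU_TYPE_X86 = 7                  # i386
CPU_TYPE_X86_64 = CPU_TYPE_X86 | CPU_ARCH_ABI64
CPU_TYPE_POWERPC = 18

CPU_NAMES = {CPU_TYPE_X86: "i386", CPU_TYPE_X86_64: "x86_64", CPU_TYPE_POWERPC: "ppc"}


class FatArch(NamedTuple):
    cputype: int
    cpusubtype: int
    offset: int
    size: int
    align: int


def parse_fat_header(data: bytes) -> List[FatArch]:
    """Return the fat_arch entries of a 32-bit Fat/Universal header."""
    if len(data) < 8:
        raise ValueError("too short for a fat header")
    magic, nfat_arch = struct.unpack_from(">II", data, 0)
    if magic != FAT_MAGIC:
        raise ValueError(f"not a fat file (magic 0x{magic:08x})")
    archs = []
    for i in range(nfat_arch):
        # Each fat_arch is two signed and three unsigned big-endian 32-bit fields.
        fields = struct.unpack_from(">iiIII", data, 8 + 20 * i)
        archs.append(FatArch(*fields))
    return archs


def describe(arch: FatArch) -> str:
    """Human-readable summary of one slice; unknown CPU types are shown in hex."""
    name = CPU_NAMES.get(arch.cputype, f"unknown(0x{arch.cputype:x})")
    return f"{name} offset={arch.offset} size={arch.size}"
```

Running `describe` over the entries returned for a file such as ncat.frankenstein would list each slice's CPU type and location, roughly the same header information MachOView displays.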

Conditions for the vulnerability to work:

- The first Mach-O in the Fat/Universal file must be signed by Apple; it can be i386, x86_64, or even PPC.

- The malicious binary, or non-Apple supplied code, must be adhoc signed and compiled for i386, targeting a 64-bit (x86_64) macOS host.

- The CPU type in the Fat header entry for the Apple binary must be set to an invalid type or to a CPU type that is not native to the host chipset.

Without the proper SecRequirementRef and SecCSFlags, the code signing API (SecCodeCheckValidity) checks the first binary in the Fat/Universal file for who signed the executable (e.g., Apple) and verifies via its cryptographic signature that it has not been tampered with. The API then checks each of the remaining binaries in the Fat/Universal file to ensure the Team Identifiers match and that each embedded cryptographic signature shows no tampering, but it does not check the CA root of trust. The malicious, or “unsigned,” code must be i386 because the code signing API prefers the native CPU architecture (x86_64) for code signing checks and would default to checking the unsigned code if it were x86_64.

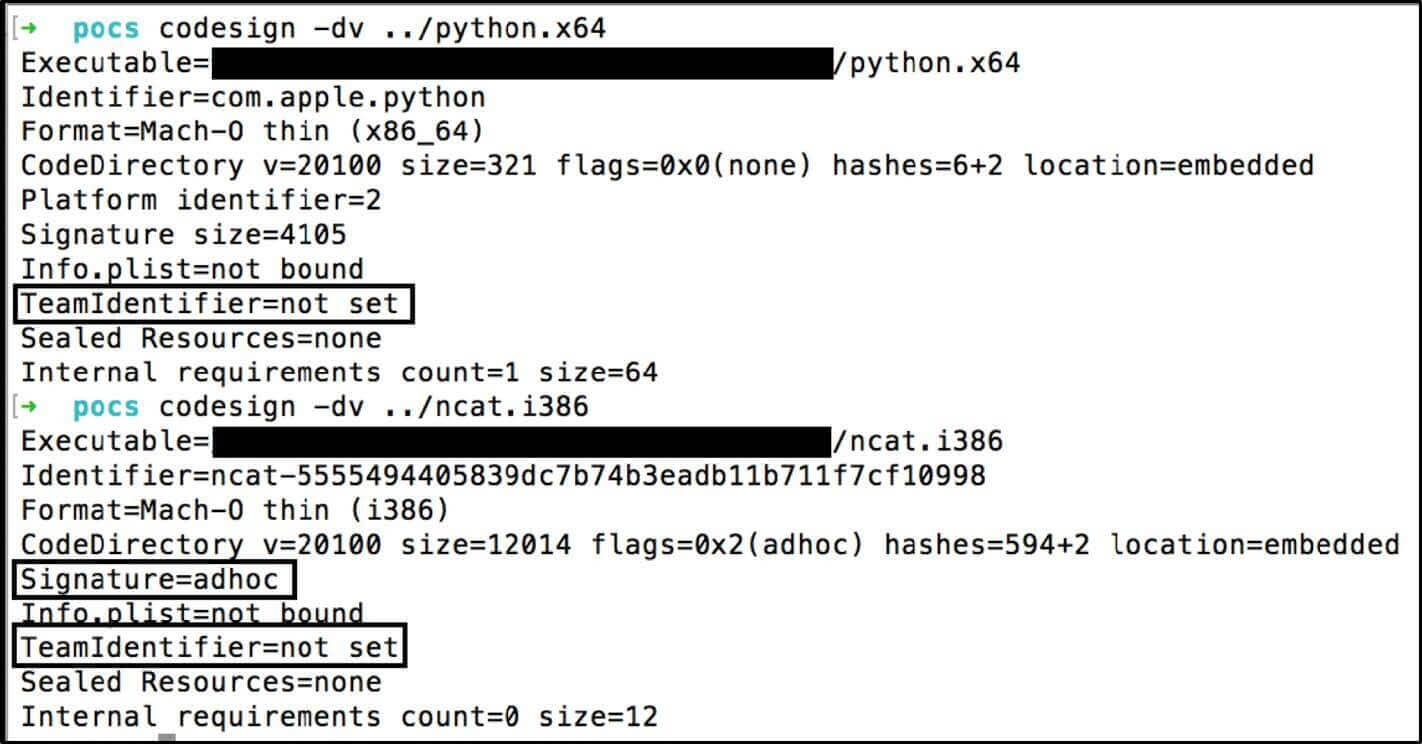

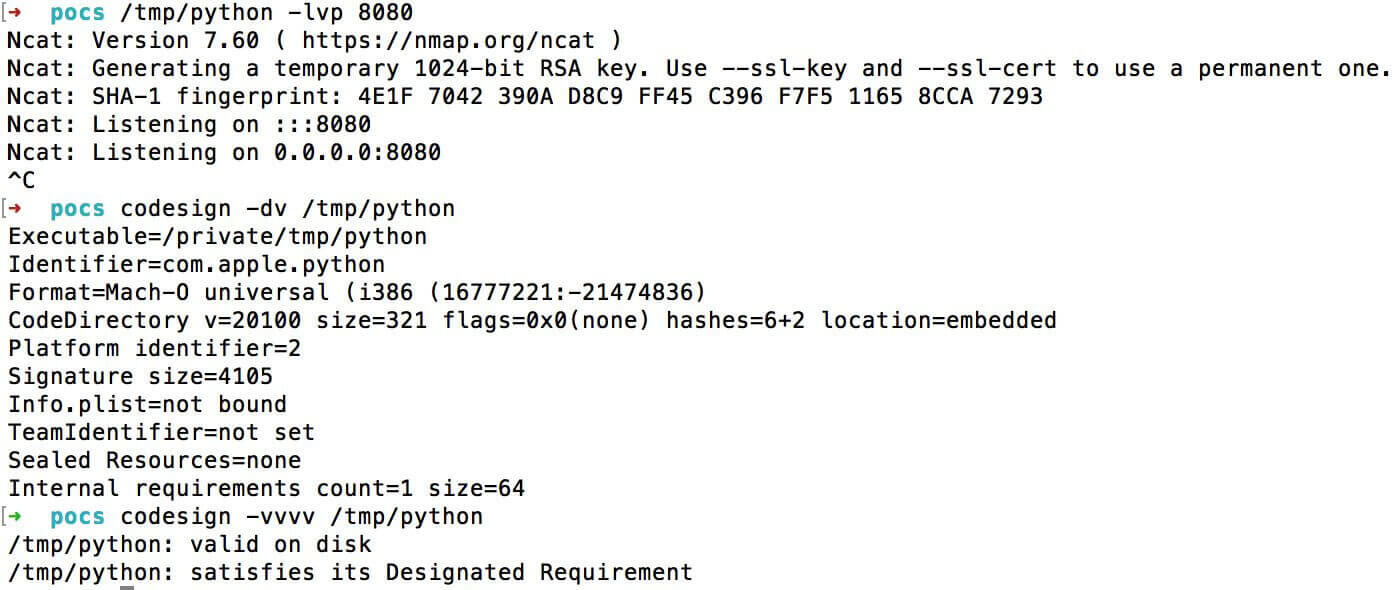

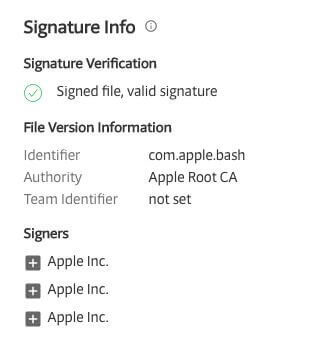

Adhoc signing of the malicious code works partly because the TeamIdentifier is “not set” even for core Apple-signed binaries. Figure 1 shows a valid Apple-signed Mach-O (python.x64) and an adhoc-signed Mach-O (ncat.i386), both with `TeamIdentifier=not set`.

By contrast, when I signed a file with a Developer ID and used lipo to combine it with an Apple-signed binary into a single Fat/Universal file, the result failed to verify with codesign.
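The point about TeamIdentifier can be illustrated with a small Python sketch that parses the `key=value` lines printed by `codesign -dvvvv`. The two sample outputs are abbreviated and illustrative (written for this example, not copied from Figure 1), and the helper names are hypothetical. Both samples report `TeamIdentifier=not set`, so only the Authority chain tells them apart. Parsing display output is for illustration only; the requirement-based checks in the Recommendation section are the reliable way to enforce a chain of trust.

```python
from typing import Dict, List

# Illustrative, abbreviated samples of `codesign -dvvvv` output (not real captures).
APPLE_SAMPLE = """Format=Mach-O thin (x86_64)
Authority=Software Signing
Authority=Apple Code Signing Certification Authority
Authority=Apple Root CA
TeamIdentifier=not set"""

ADHOC_SAMPLE = """Format=Mach-O thin (i386)
Signature=adhoc
TeamIdentifier=not set"""


def parse_codesign_display(text: str) -> Dict[str, List[str]]:
    """Collect key=value lines; keys such as Authority repeat, so values are lists."""
    fields: Dict[str, List[str]] = {}
    for line in text.splitlines():
        key, sep, value = line.partition("=")
        if sep:
            fields.setdefault(key.strip(), []).append(value.strip())
    return fields


def team_id(fields: Dict[str, List[str]]) -> str:
    """First TeamIdentifier value, or an empty string if absent."""
    return fields.get("TeamIdentifier", [""])[0]


def chains_to_apple_root(fields: Dict[str, List[str]]) -> bool:
    """True if the displayed Authority chain ends at Apple Root CA."""
    return "Apple Root CA" in fields.get("Authority", [])


apple = parse_codesign_display(APPLE_SAMPLE)
adhoc = parse_codesign_display(ADHOC_SAMPLE)
print(team_id(apple) == team_id(adhoc))                          # True
print(chains_to_apple_root(apple), chains_to_apple_root(adhoc))  # True False
```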

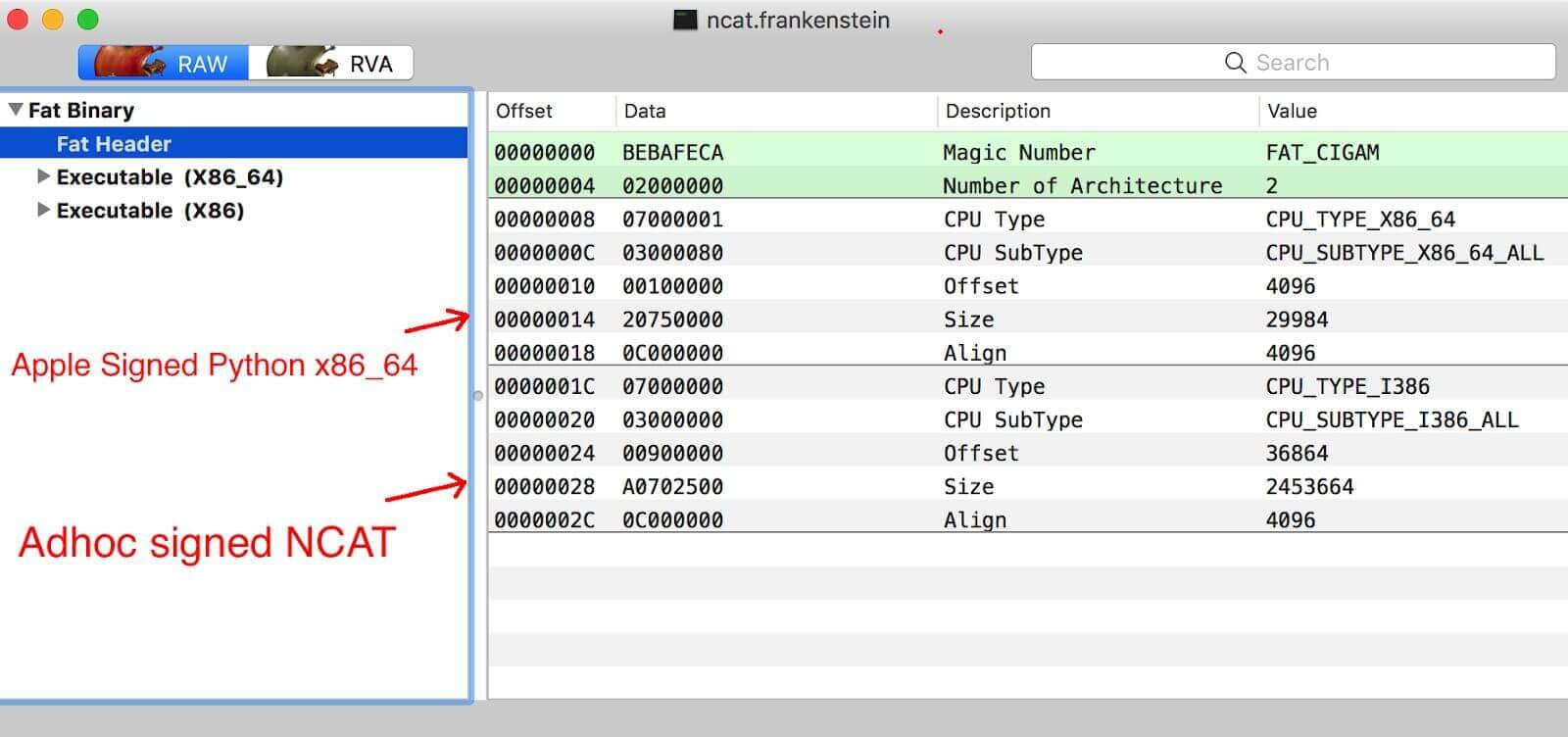

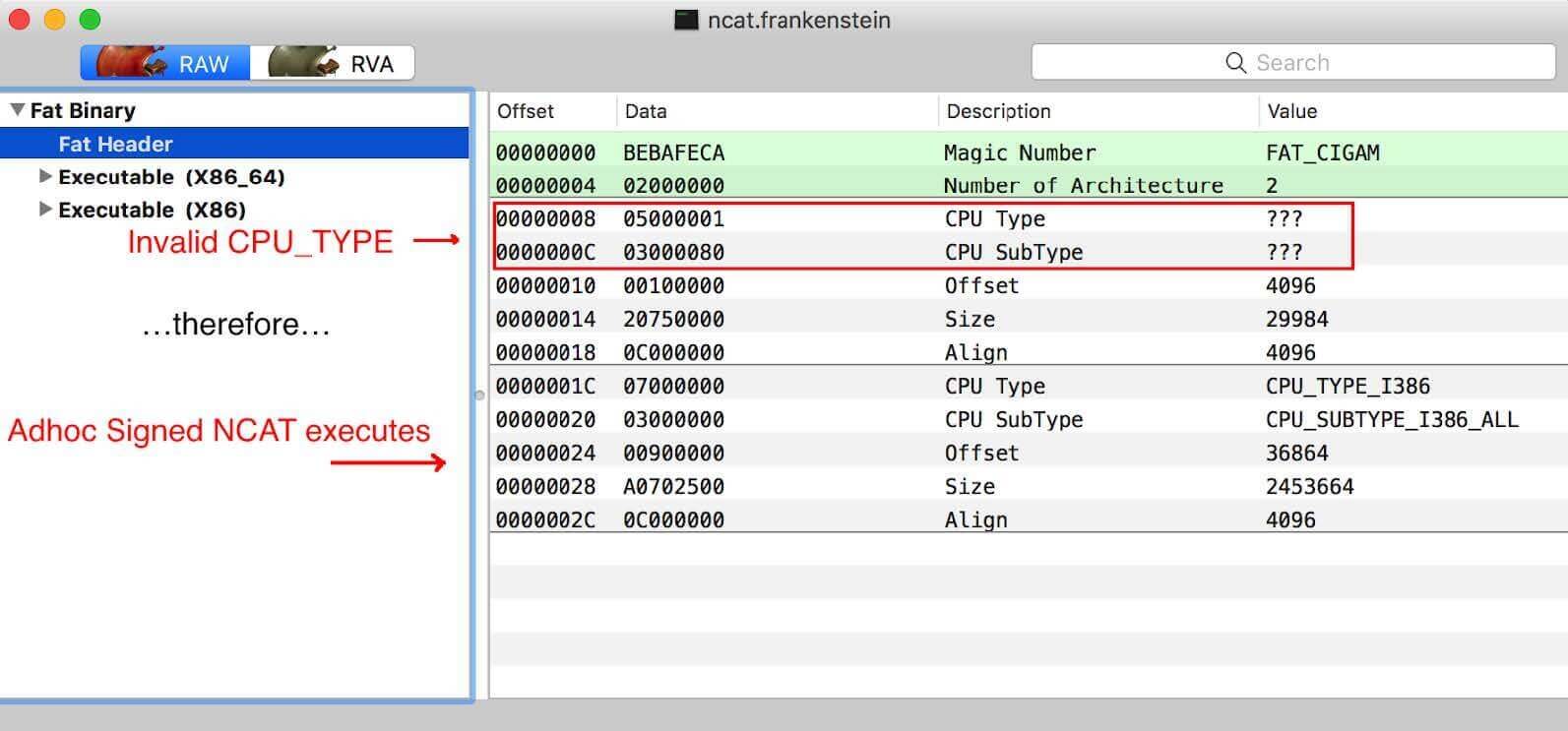

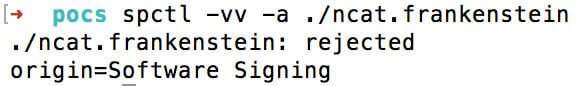

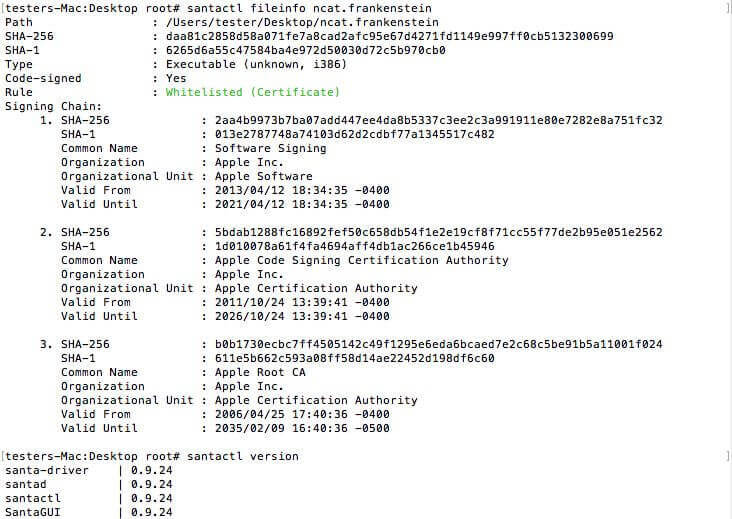

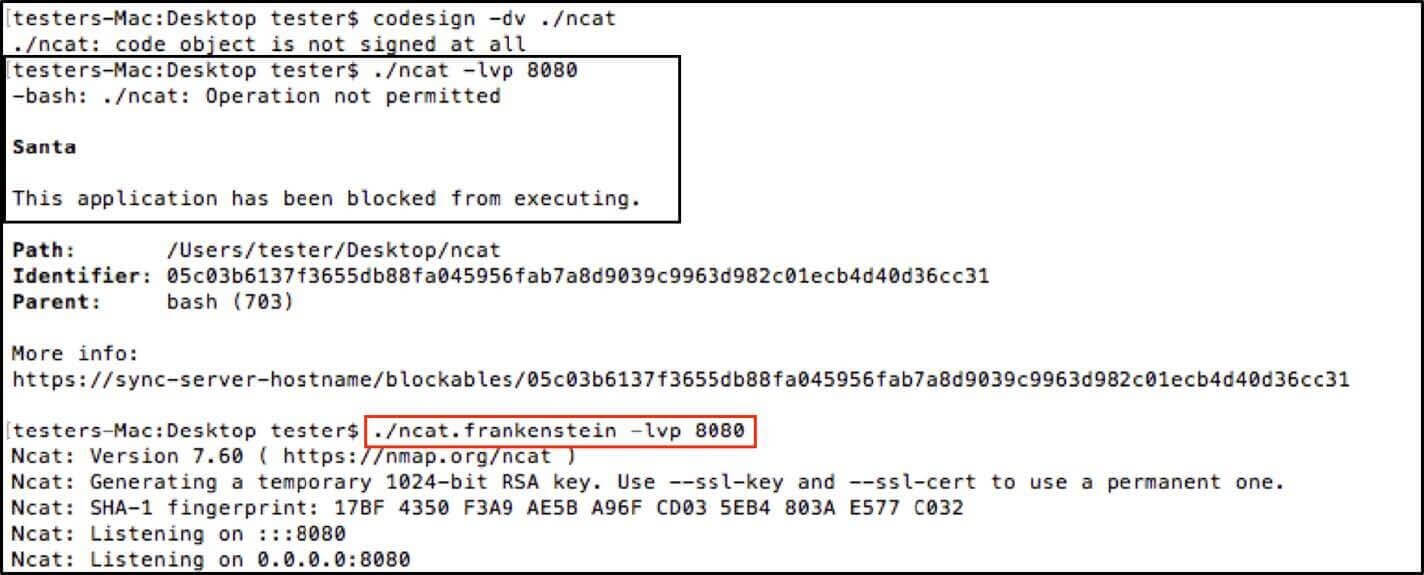

My initial POC was an ncat (from nmap) example I called ncat.frankenstein, in which I combined the Apple-signed python x86_64 binary and an adhoc-signed ncat i386 binary into one Fat file. Creating an adhoc-signed binary is as easy as `codesign -s - target_mach-o_or_fat_binary`. If viewed in MachOView:

At this point, if I attempt to execute this file, the python x86_64 binary executes:

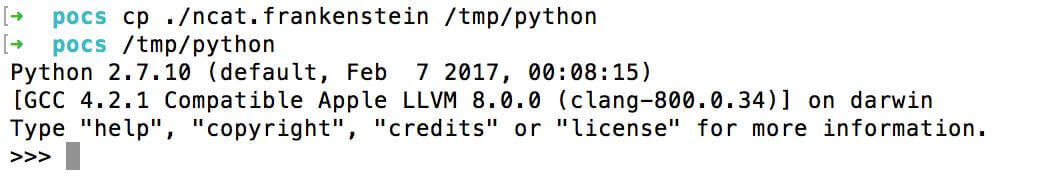

And the code signature verifies as valid:

How can I have the self-signed/adhoc-signed ncat binary executed?

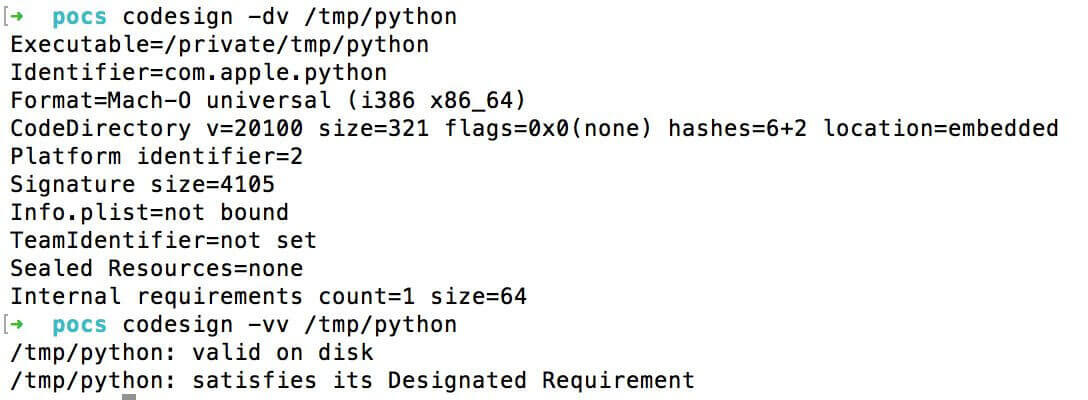

By setting the CPU type of the Apple-signed slice to an invalid type or to a valid but non-native CPU type (example: PPC), the Mach-O loader will skip over the validly signed Mach-O binary and execute the malicious (non-Apple signed) code:

Then ncat.frankenstein executes, and the strict checking result shows as ‘valid’:
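The loader side of this trick can be modeled in a few lines of Python. This is a toy model, not the kernel's actual slice-selection logic: it assumes a 64-bit Intel host that prefers an x86_64 slice and falls back to i386, ignores CPU subtypes, and skips any slice whose CPU type it does not run. The constants mirror `<mach/machine.h>`; the function names are hypothetical.

```python
from typing import List, Optional

CPU_ARCH_ABI64 = 0x01000000
CPU_TYPE_X86 = 7                                  # i386
CPU_TYPE_X86_64 = CPU_TYPE_X86 | CPU_ARCH_ABI64
CPU_TYPE_POWERPC = 18

# Assumed preference order for a 64-bit Intel host (simplified model).
X86_64_HOST_PREFERENCE = [CPU_TYPE_X86_64, CPU_TYPE_X86]


def loader_pick(cputypes: List[int],
                preference: List[int] = X86_64_HOST_PREFERENCE) -> Optional[int]:
    """Index of the slice this model would execute, or None if none can run."""
    for wanted in preference:
        for index, cputype in enumerate(cputypes):
            if cputype == wanted:
                return index
    return None


def first_slice_skipped(cputypes: List[int],
                        preference: List[int] = X86_64_HOST_PREFERENCE) -> bool:
    """True when the model runs some slice other than the first one."""
    chosen = loader_pick(cputypes, preference)
    return chosen is not None and chosen != 0


original = [CPU_TYPE_X86_64, CPU_TYPE_X86]    # Apple python x86_64 + adhoc ncat i386
relabeled = [CPU_TYPE_POWERPC, CPU_TYPE_X86]  # first slice's CPU type changed to PPC
print(loader_pick(original))   # 0
print(loader_pick(relabeled))  # 1
```

A check like `first_slice_skipped` could serve as a cheap triage heuristic for this layout, but it is no substitute for verifying every architecture against an anchor requirement.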

We are providing the ncat.frankenstein example and four other examples for developers to test if their products are affected.

Sample Name | SHA-256 Hash | Notes |

|---|---|---|

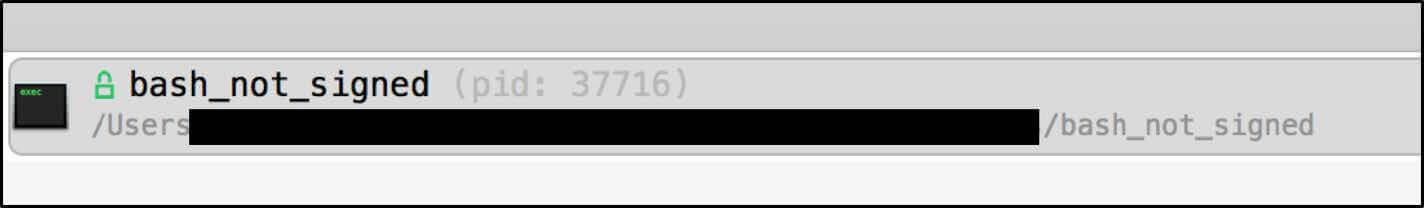

| bash_not_signed | 5daba6c40be028d2139d1c7246f7ea2d86adedf44daf05f7be47307ebc6a572e | Sample Not signed, malformed header |

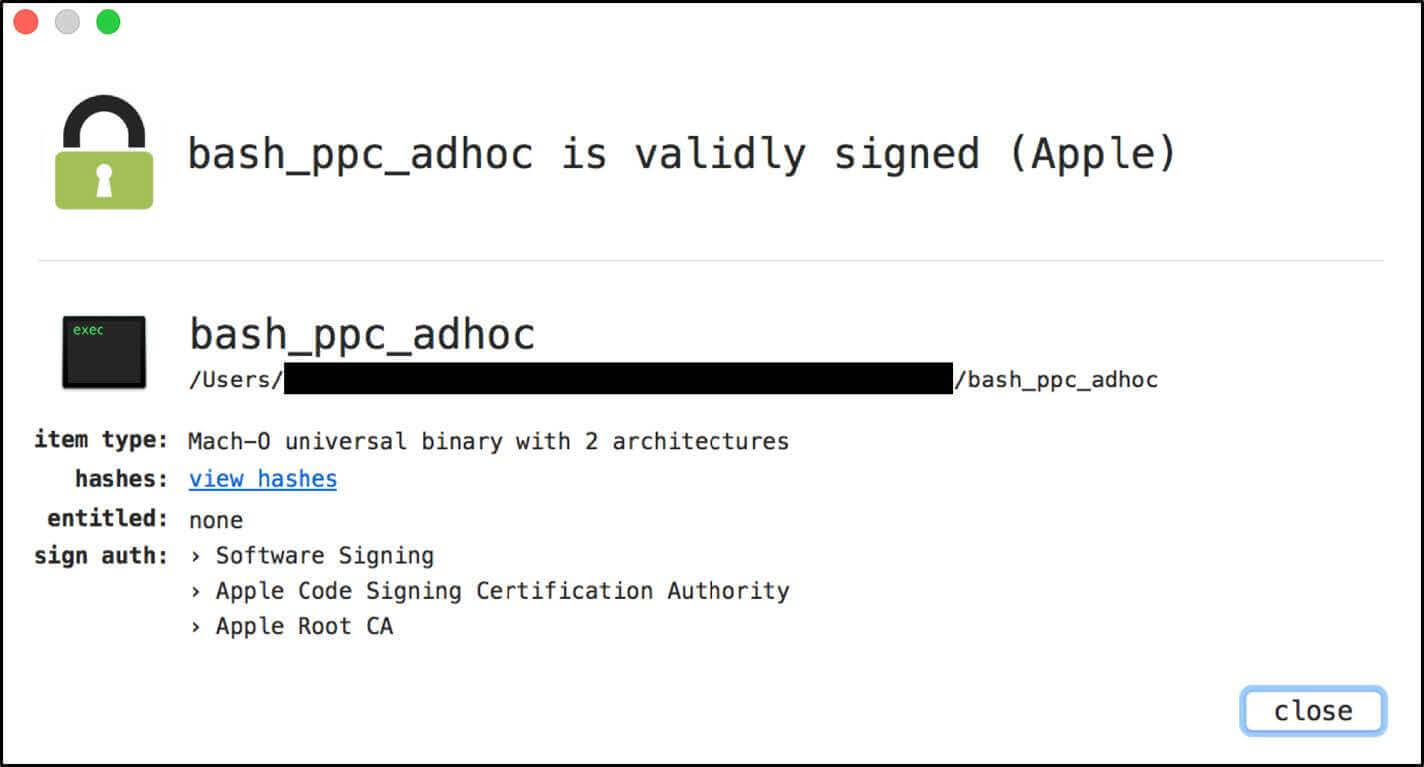

| Bash_ppc_adhoc | 67884b18faa1225903a3cc0a5ec6acc8b73b2b0d61d0935eb6510de11abd0cc7 | PPC, adhoc signed, non-malformed header |

| Bash_adhoc | 9dc5b06c50a2566de5fc695f1d18fc2c5efb273fd4e957db68708c052f805751 | Adhoc signed, malformed header |

| Terminal.app (requires Sierra macOS 10.12 to execute) | Terminal.app.zip 9b33dc17555663b378fe7ea698be0cb857fc739c23fc6a46b39617228b04c0cc | Application Bundle, Adhoc signed, malformed header |

| ncat.frankenstein | daa81c2858d58a071fe7a8cad2afc95e67d4271fd1149e997ff0cb5132300699 | Adhoc signed, malformed header |

Recommendation:

Via Command line

It depends on how you check signed code. If you use codesign, you are probably already familiar with the following commands:

- `codesign -dvvvv` - This will dump the CA authority and TeamIdentifier (developer ID).

- `codesign -vv` - This will do strict checking and validate all architectures.

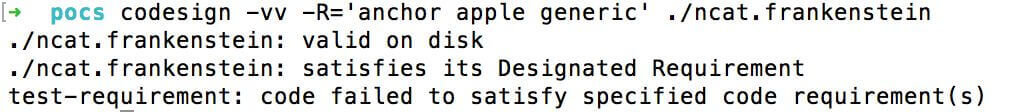

However, to properly check for this type of abuse you need to add an anchor certificate requirement via the following commands:

- `codesign -vv -R='anchor apple' ./some_application_or_mach-o` - for Apple signed code

- `codesign -vv -R='anchor apple generic' ./some_application_or_mach-o` - for Apple signed code and Apple developer signed code

Doing so will result in a proper failure to satisfy the requirement:

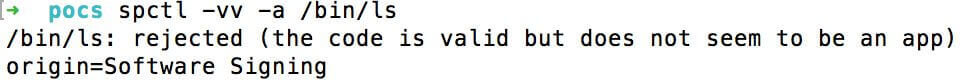

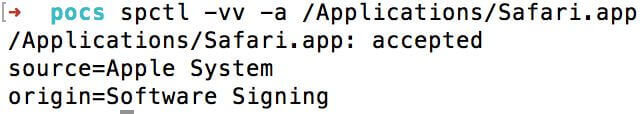

The `spctl` command could be used, but its output requires careful analysis. For example, here is what it returns for a valid Apple-signed Mach-O binary (/bin/ls) and for Safari:

Normal output from a valid Apple-signed application bundle:

Notice that /bin/ls is rejected, yet its output includes the string “(the code is valid but does not seem to be an app)”. Compare the ncat.frankenstein Fat/Universal file:

For ncat.frankenstein, there is no output stating that the code is valid. Because this difference is easy to miss, I cannot recommend spctl for manual verification of standalone Mach-O binaries; just use codesign with the requirements flag.

For developers

Typically, a developer would check a Mach-O binary or Fat/Universal binary with the SecStaticCodeCheckValidityWithErrors() or SecStaticCodeCheckValidity() APIs and the following flags:

These flags are supposed to ensure that all the code in a Mach-O or Fat/Universal file that is loaded into memory is cryptographically signed. However, these APIs fall short by default, and third party developers will need to carve out each architecture in the Fat/Universal file and verify that the identities match and are cryptographically sound.

The best way to check each nested architecture in a Fat/Universal file is to first call SecRequirementCreateWithString with the requirement “anchor apple”, then call SecStaticCodeCheckValidity with the flags kSecCSDefaultFlags | kSecCSCheckNestedCode | kSecCSCheckAllArchitectures | kSecCSEnforceRevocationChecks and the resulting requirement reference, as demonstrated in Patrick Wardle’s patched WhatsYourSign source code.

Passing “anchor apple” to the SecRequirementCreateWithString function works much like `codesign -vv -R='anchor apple'`, requiring the Apple Software Signing chain of trust across all nested binaries in the Fat/Universal file. Furthermore, passing the noted flags and the requirement to SecStaticCodeCheckValidity checks all architectures against the requirement and applies revocation checks.
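The carve-out approach can be sketched in Python. This version swaps the Security framework calls for the `codesign` command-line tool, so it is a stand-in for, not an implementation of, the SecStaticCodeCheckValidity check used in WhatsYourSign. It assumes a standalone Mach-O or a 32-bit Fat/Universal file (not an application bundle), and it assumes that, as with `lipo -thin`, each carved slice keeps its own embedded signature intact. `fat_slices` and `verify_each_slice` are hypothetical names, and `verify_each_slice` only works on macOS.

```python
import os
import struct
import subprocess
import tempfile
from typing import List, Optional, Tuple

FAT_MAGIC = 0xCAFEBABE  # 32-bit fat header magic from <mach-o/fat.h>


def fat_slices(data: bytes) -> List[Tuple[Optional[int], bytes]]:
    """Split a 32-bit Fat/Universal image into (cputype, slice_bytes) pairs.

    Anything without the fat magic is returned as one thin slice with cputype None.
    """
    if len(data) < 8 or struct.unpack_from(">I", data, 0)[0] != FAT_MAGIC:
        return [(None, data)]
    (nfat_arch,) = struct.unpack_from(">I", data, 4)
    slices = []
    for i in range(nfat_arch):
        # fat_arch: cputype, cpusubtype, offset, size, align (big-endian).
        cputype, _subtype, offset, size, _align = struct.unpack_from(
            ">iiIII", data, 8 + 20 * i)
        if offset + size > len(data):
            raise ValueError(f"slice {i} extends past the end of the file")
        slices.append((cputype, data[offset:offset + size]))
    return slices


def verify_each_slice(path: str, requirement: str = "anchor apple") -> bool:
    """macOS only: True if every carved slice satisfies `requirement` under codesign."""
    with open(path, "rb") as f:
        data = f.read()
    for _cputype, blob in fat_slices(data):
        with tempfile.NamedTemporaryFile(delete=False) as tmp:
            tmp.write(blob)
        try:
            # "-R=<text>" mirrors `codesign -vv -R='anchor apple'` from the text.
            result = subprocess.run(
                ["codesign", "-vv", f"-R={requirement}", tmp.name],
                capture_output=True)
        finally:
            os.unlink(tmp.name)
        if result.returncode != 0:
            return False
    return True
```

Because every slice must satisfy the same requirement, an Apple-signed slice can no longer vouch for an adhoc-signed one. Passing `"anchor apple generic"` as the requirement would also accept Apple developer signed code.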

Proofs of Concept:

Apple’s ‘codesign’ tool and why one should use the requirements flag (-R).

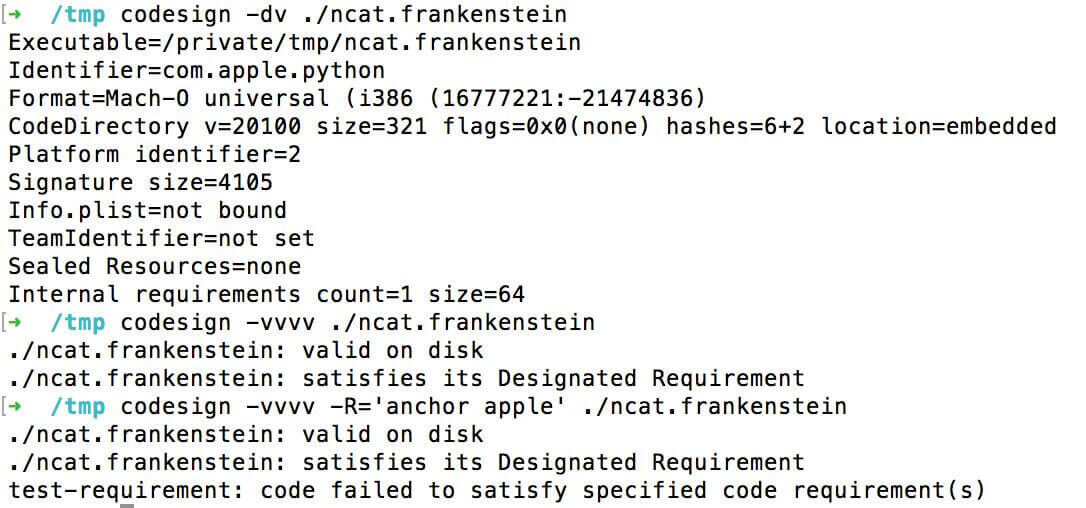

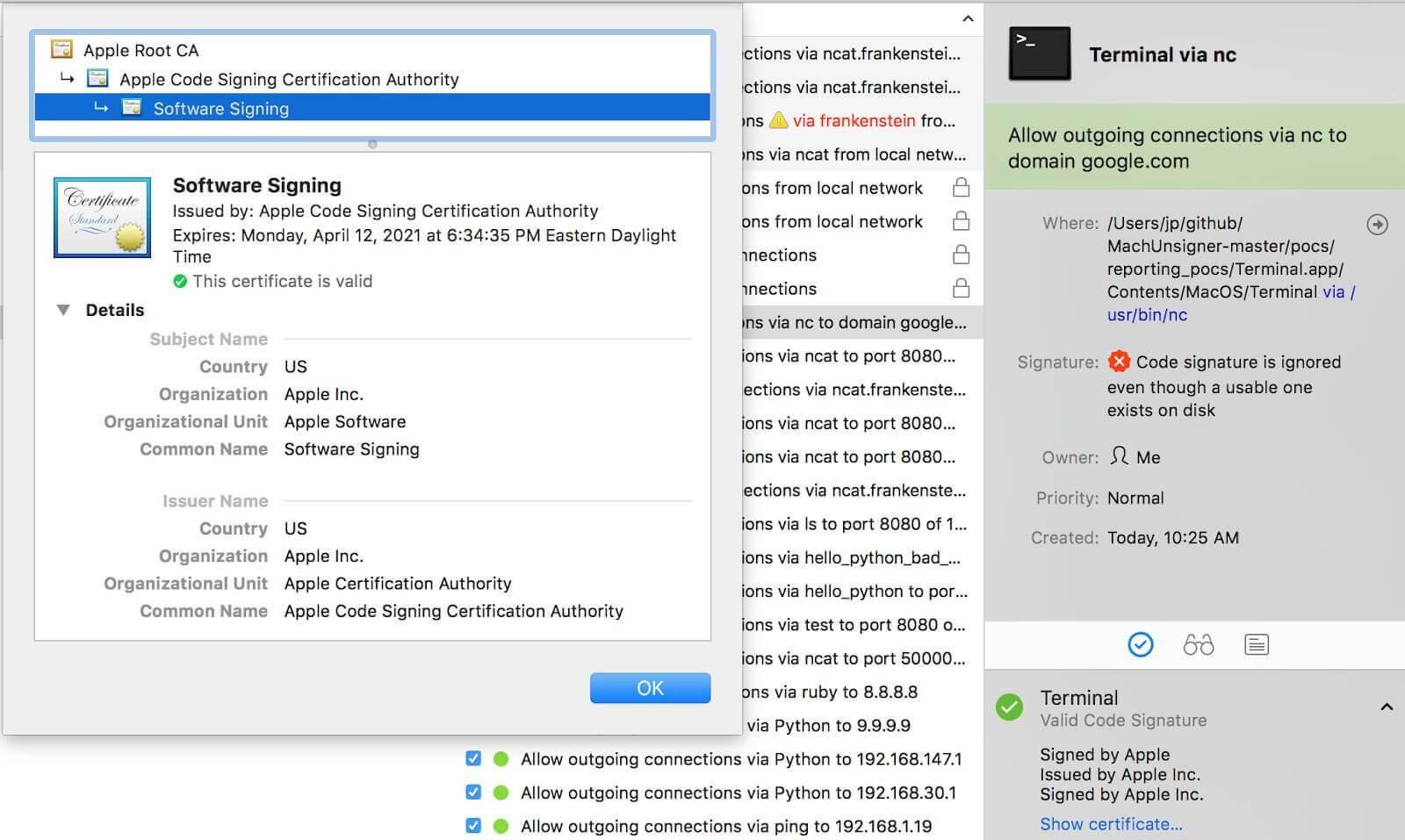

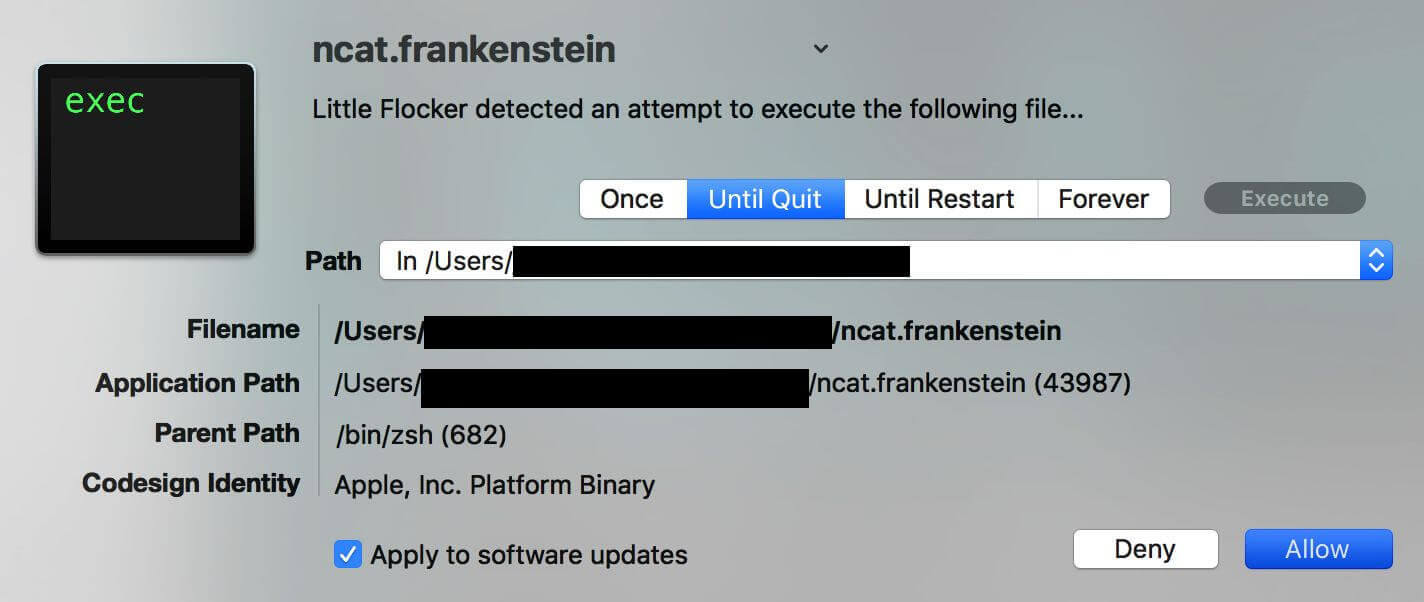

LittleSnitch – Verification of the Fat/Universal file on disk failed; however, LittleSnitch does correctly check the process mapped into memory.

LittleFlocker – F-Secure bought LittleFlocker, which is now xFence.

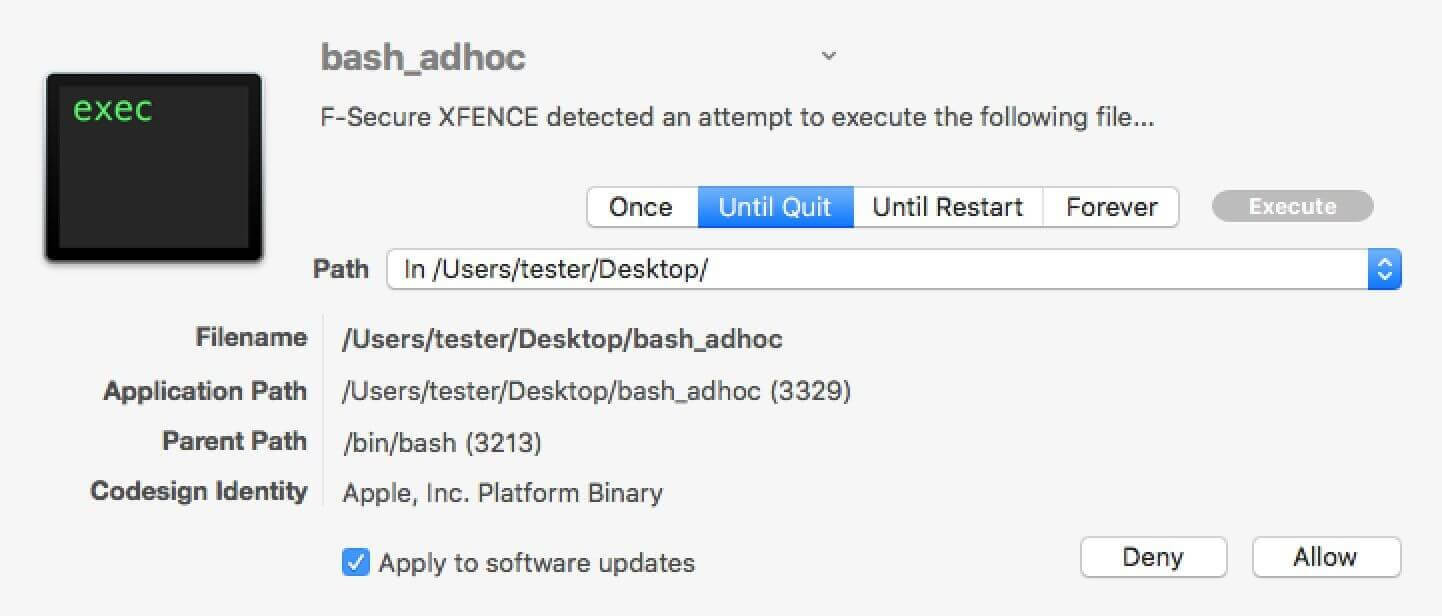

F-Secure xFence (formerly LittleFlocker)

Objective-See Tools

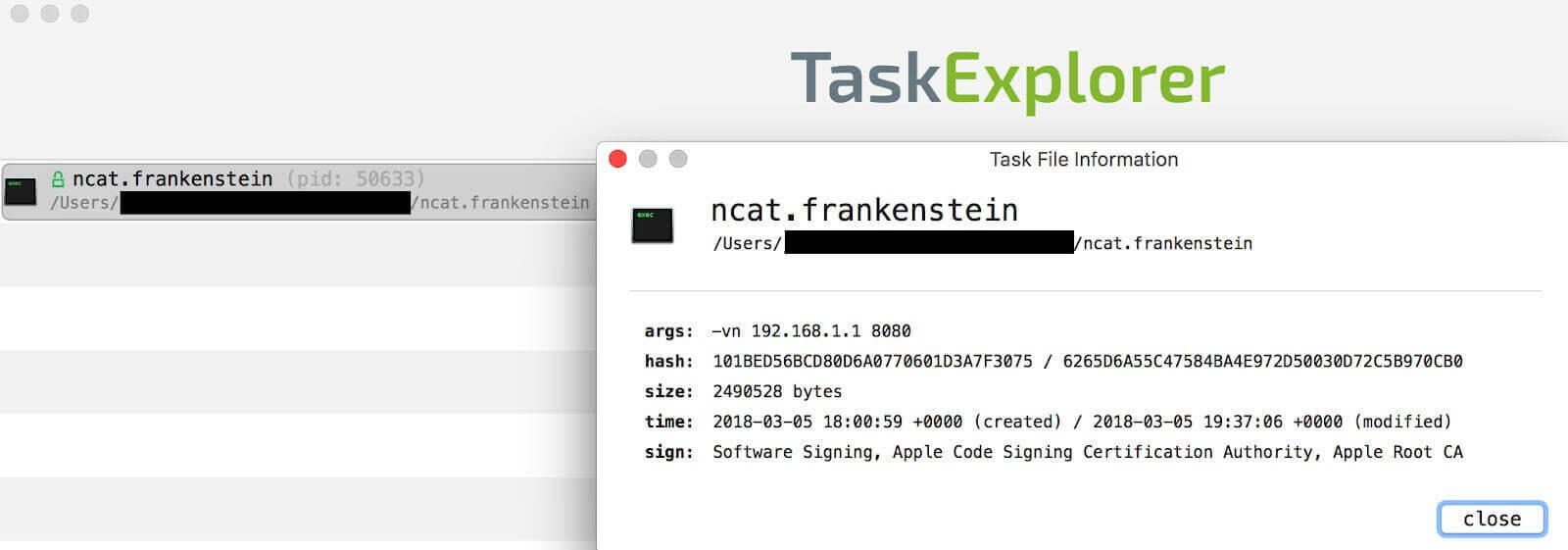

- TaskExplorer

- WhatsYourSign

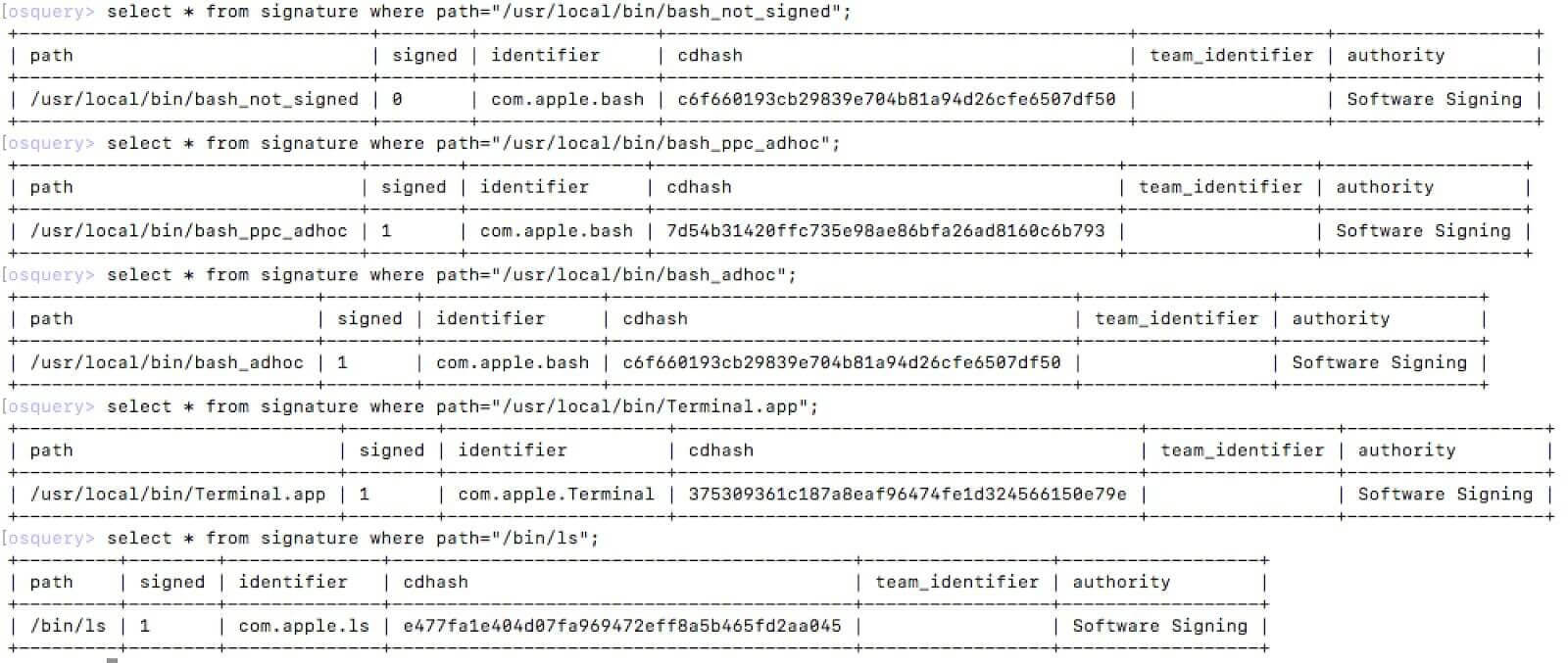

Facebook osquery – checks against the malicious samples, with /bin/ls as a valid example.

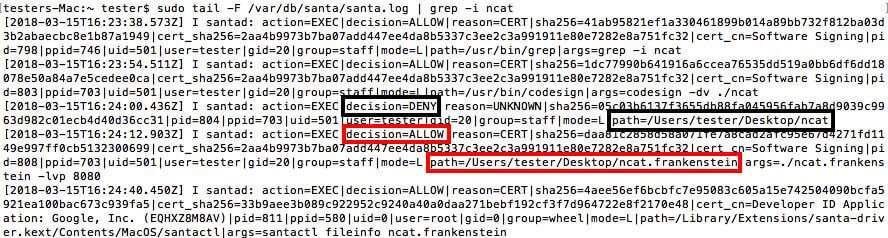

Google Santa – Fileinfo output showing that ncat.frankenstein is whitelisted.

Showing the execution deny of ncat (unsigned) and an execution allow of ncat.frankenstein:

Detailed output of santa.log showing event actions on prior examples:

Carbon Black Response

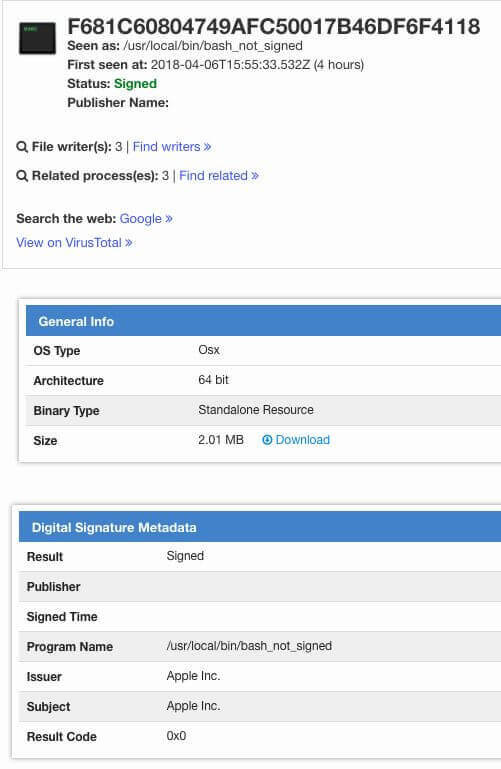

VirusTotal – Example of bash_ppc_adhoc before VirusTotal patched the issue:

Disclosure Timeline

February 22, 2018 – Submitted a report to Apple with a POC that was able to bypass third party security tools.

March 1, 2018 – Apple responded that third party developers should use kSecCSCheckAllArchitectures and kSecCSStrictValidate with SecStaticCodeCheckValidity API and developer documentation will be updated.

March 6, 2018 – Submitted a report and POC to Apple that bypasses both flags and “codesign” strict checking.

March 16, 2018 – Submitted additional information to Apple.

March 20, 2018 – Apple stated they did not see this as a security issue that they should directly address.

March 29, 2018 – Apple stated that documentation could be updated and new features could be pushed out, but: “[…], third-party developers will need to do additional work to verify that all of the identities in a universal binary are the same if they want to present a meaningful result.”

April 2, 2018 – Initial contact with CERT/CC and subsequent collaboration to clarify the scope and impact of the vulnerability.

April 9, 2018 – All known affected third party developers are notified in coordination with CERT/CC.

April 18, 2018 – Last contact with CERT/CC, recommending that a public disclosure via blog post is the best way to reach the remaining third parties that use code signing APIs in a private manner.

June 05, 2018 – Final Developer contact before publication.

June 12, 2018 – Disclosure publication

In closing

Thanks to all the third party developers for their hard work and professionalism in addressing this issue. Code signing vulnerabilities can be particularly demoralizing, especially for companies that are trying to provide assurance beyond the default state of operating system security. For those interested in the details of how I found this vulnerability, please attend my Shakacon talk next month in Oahu, Hawaii.