Oktane19: OAuth: When things go wrong!

Transcript

Aaron Parecki: Hello everyone. I'm Aaron Parecki, and I'm very excited to be here and talk to you today about when things can go wrong with OAuth. I do want to add a quick note. They didn't actually ask me to put this in there, but I do want to make it very clear that this does not represent Okta's views, and I will be calling out specific companies about some things that have gone wrong. I don't mean to pick on them, they are just good examples of ways in which this stuff is hard. So, it's hard for everybody.

Aaron Parecki: So, a little background on me. I've been involved in OAuth for a long time. I maintain oauth.net, which is the community website for OAuth, and I've also been involved in creating some of the specs that go into OAuth. This is one of the recent ones we've been working on, recommendations for doing OAuth in browser based apps because, again, this stuff is often hard to navigate and a challenge, so it helps to write these things down.

Aaron Parecki: I also recently wrote a book on OAuth and we had a bunch of copies of this here at the developer booth but they are, I think all gone now, so apologies if you didn't get one. This was published in conjunction with Okta, so this talks a lot about how OAuth works and things like that.

Aaron Parecki: So, I wanted to start out with a little bit of background about what OAuth is, why we have this at all, and what problems it's solving. That will help us frame this conversation about when OAuth does go wrong, we can kind of understand some of the context about it.

Aaron Parecki: A long time ago, we had this pattern on the Internet which would happen over and over again. So every time a new app would launch, like in this case, Yelp, it would ask you, hey let's see if any of your friends are already using Yelp. And it would ask you to enter your email address, and then it would ask you for the password to your email address. Now, I hear some chuckles and like, really? Is this for real? And yeah, this was considered normal at the time. Even Facebook was doing this. Can you imagine if Facebook did this now? This would not fly. We understand now that this is a terrible pattern, but there wasn't an alternative 10 years ago.

Aaron Parecki: So, the goal here is that this app, like Facebook or Yelp, is trying to get access to your address book so that it can compare the list of your friends with people who have already signed up for the service. It's trying to get access to this one API, the Google Contacts API. It doesn't need access to your email, and they don't actually want access to your email, it's just that the only way to do that was to have them both together.

Aaron Parecki: So, there's obviously several different ways this could be solved, but also many ways in which people tried to solve it that didn't work. Yelp can't just go and ask Google for a random person's address book, and the app shouldn't be allowed to ask a user to enter their password and hand their Google password over. So the problem statement here is: how can users let this app access some part of their account without giving it their password? Because people did want the end result, where Yelp has access to your address book so it can see who else is on the network, and you have all the friend connections already there. People wanted that so bad that they were willing to give their password to this thing asking for it, because that was the only way to do it.

Aaron Parecki: This is the problem being solved by OAuth. Now, instead of a "type in your password" field on these apps, it's "connect with Google". So this is now a much better pattern, and now instead of typing your Gmail password into Yelp, you can go and log into Google, Google gives you back a thing that you can take over to Yelp and give Yelp this temporary access to one particular part of the account instead of having to give it your entire account credentials.

Aaron Parecki: The thing that ends up getting used in this case is called an OAuth access token, and the access token is basically just a string that represents that a user has allowed an application to access their account. That string is used in API requests like this. So, you can think of that like a key. It's a key that can do just one thing.
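As a rough illustration of "a string used in API requests", the sketch below attaches a token as a Bearer credential in the `Authorization` header, which is the common convention for OAuth 2 access tokens. The URL and token value are placeholders invented for this example, and no request is actually sent.

```python
import urllib.request

# Hypothetical values for illustration only; neither comes from the talk.
ACCESS_TOKEN = "example-access-token"
API_URL = "https://api.example.com/contacts"


def build_api_request(url: str, access_token: str) -> urllib.request.Request:
    """Build (but do not send) an API request carrying the access token."""
    request = urllib.request.Request(url)
    # The API only sees the token; it never sees the user's password.
    request.add_header("Authorization", f"Bearer {access_token}")
    return request


request = build_api_request(API_URL, ACCESS_TOKEN)
print(request.get_header("Authorization"))
```

The API on the other end treats the token like the hotel door treats the key card: it checks what the token is allowed to do, not who is holding it.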

Aaron Parecki: It's kind of like a hotel key card. So when you go to a hotel, you check into the front desk. You log into the front desk. You show them your ID and they give you back a hotel key card. That's like an OAuth access token. You take that key card, you go to the hotel door, you swipe it through the door, and the door opens. The door doesn't care who you are. The door has no desire, no need to know your identity or your unique ID or your name or anything. All the door cares about is whether this card can access this door at this time. That's the same thing that happens in OAuth. An OAuth access token doesn't necessarily have to represent a user, although in many cases it does. But it represents access to data.

Aaron Parecki: Okay, so we're going to talk a little bit about how OAuth works and how some of the data moves around in this world, which will help set us up for understanding some of the problems.

Aaron Parecki: There's typically four roles in OAuth. There's going to be the user who has an account at some server and some data that lives over in the API. There's going to be some application that they're trying to use, like Yelp, or a web app, or a mobile app, or whatever kind of application that is, and that's called the Client in OAuth terms. And then the OAuth server is the thing that's responsible for creating access tokens and asking the user if it's okay for this application to get an access token. Then of course, once the app has an access token, it can go make API requests, and that API has to know how to check access tokens. So there's a lot of data flying around back and forth, right?

Aaron Parecki: Let's walk through a typical OAuth flow, an exchange, and see how this data moves around. Start out at the top. The user is sitting in front of their computer or using a mobile app, and they are going to go and look at this application and say hey, I would like to use this. That's going to be clicking a button that says something like log in with Google. The application will say cool, okay, go over there so I can get access. Then the user's browser will go over to the OAuth server and say hey, I would like to use this application, it wants to access my photos or my contacts or whatever that is. Assuming the user clicks OK, the OAuth server will create a temporary code, give it back to the user, and the user then takes it to the application. The application is like great, now I can go get a token with this. So the app then says to the OAuth server, here is the temporary code I got from the user, I would like an access token, and the server replies back with an access token. And now it can go make API requests.
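The flow just described can be sketched in a few lines. This is a minimal outline of the authorization code flow under assumed names: the endpoint, client ID, and redirect URI are hypothetical placeholders, and the functions only build the data that would be sent rather than making network calls.

```python
from urllib.parse import parse_qs, urlencode, urlparse

# Hypothetical placeholders; a real OAuth server documents its own values.
AUTHORIZE_ENDPOINT = "https://auth.example.com/authorize"
CLIENT_ID = "example-client-id"
REDIRECT_URI = "https://app.example.com/callback"


def build_authorization_url(scope: str) -> str:
    """Step 1: the URL the app sends the user's browser to."""
    params = {
        "response_type": "code",  # ask for a temporary code, not a token
        "client_id": CLIENT_ID,
        "redirect_uri": REDIRECT_URI,
        "scope": scope,
    }
    return f"{AUTHORIZE_ENDPOINT}?{urlencode(params)}"


def extract_code(callback_url: str) -> str:
    """Step 2: the browser returns to the app carrying the temporary code."""
    query = parse_qs(urlparse(callback_url).query)
    return query["code"][0]


def build_token_request(code: str) -> dict:
    """Step 3: form fields the app POSTs directly to the OAuth server."""
    return {
        "grant_type": "authorization_code",
        "code": code,
        "redirect_uri": REDIRECT_URI,
        "client_id": CLIENT_ID,
    }


authorization_url = build_authorization_url("contacts.read")
code = extract_code("https://app.example.com/callback?code=abc123")
token_request = build_token_request(code)
print(authorization_url)
print(token_request)
```

Steps 1 and 2 travel through the user's browser, while step 3 is a direct request from the app to the OAuth server.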

Aaron Parecki: So, the interesting thing here is that the way this data moves around is very different between the first part and the second part. I colored these lines down here to call them out. The lines at the bottom are what we call sent over a back channel. The back channel is the idea of sending data from one thing to a server over a secure connection where the response comes back immediately. It's like your typical HTTP request or REST call, we're very used to that way of sending data. The idea of sending data over the front channel is probably less familiar.

Aaron Parecki: The point there is that because these two things don't necessarily have a way to communicate over a back channel, we'll actually send that data using the user's address bar. Because it's using the user's address bar, there's many ways that can go wrong, many ways it can fail. The user can modify the data, some other software on the machine can modify data. So obviously there's a bunch of benefits to sending data over the back channel, right? The thing making the request knows what server it's talking to because it checked the SSL cert. That connection is secure, it can't be tampered with. And then the response that comes back is part of the same connection so the thing getting data can already know it's trusted. So, it's like very carefully handing, by hand, data from one to another. Whereas passing data over the front channel is like throwing it in the air and hoping they catch it.

Aaron Parecki: So, why do we use the front channel? It turns out there are some benefits still and one of the benefits is it forces the user to be involved in the process, so that you can ensure that the user did in fact give permission for this to happen. And secondly it means that the thing receiving data doesn't need to have an IP address on the Internet. It just needs to be some code running on a device. That lets it work on the phone or lets it work in a browser based app where there is no public IP address for that application. This does of course come with a bunch of risks, so from the sender's point of view, the sender is going to be sending data through the address bar and has no guarantee that it's going to make it to the recipient.

Aaron Parecki: Because it's written in the address bar, it becomes a part of the browser's history, which means it may be synced to the cloud. So if you're signed into Google Chrome, then your browser history is probably synced up to Google, then probably synced back down to your other browsers. And then from the receiver's point of view, if you received it over the front channel, you can't actually guarantee it came from the right spot. Because somebody else could have thrown you that data, right?

Aaron Parecki: Okay, so this is kind of the set up. We have the flow of all these different parties. Some of the data gets sent over the front channel, some gets sent over the back channel, who's trusting who. So let's talk about some of the ways that things can go wrong in OAuth.

Aaron Parecki: It actually turns out there's a lot of different moving parts here, and a lot of things can potentially go wrong. There's a lot of things to protect against. So, there's a lot of API keys involved here, so you have to make sure they don't get stolen. Then access tokens are issued at the end, and applications have to make sure those access tokens can't get stolen. While sending the data over the front channel, there's that redirect that happens, which means you have to make sure that can't be intercepted or intentionally redirected. Then there's still phishing.

Aaron Parecki: OAuth is not immune to phishing attacks. They just look a little bit different in the OAuth world. There are so many tricks in this space, and so many things to be aware of, that there are actually multiple documents dedicated to documenting them. So, starting with RFC 6749, which is the OAuth core spec. There's a section in there that talks about several common threats and ways to avoid them. That was written quite a while ago now, and that was sort of the well known ones.

Aaron Parecki: A few years later there was this RFC 8252, which talks about some new problems related to tokens and things like that. And then there's RFC 6819, which is like a document dedicated to just security topics around OAuth. And there's even a new one which is in draft right now, which is a whole host of new things since OAuth has kind of evolved a lot in the last 10 years. So there's a lot there to read and we try to make this stuff easy to read, but there's really a lot there.

Aaron Parecki: So, let's talk about the first example here. And that is from Twitter. In 2013, we saw a bunch of headlines like this. Twitter's OAuth API keys were leaked. The secret OAuth app keys to Twitter's VIP lounge, very sensationalist headline, but fine. What actually happened here, Twitter obviously has like an iPhone app, an Android app, a Windows app, back when Windows phone was a thing. And the way Twitter's OAuth API works is it requires signing every request with a secret. So, you need a secret for the app, so Twitter goes and makes a secret for their iPhone app, makes a secret for their Android app, puts it into the app, ships it to users, and everything works fine, right?

Aaron Parecki: Well, turns out that when you try to put secrets into mobile apps, they're not secret anymore because it is trivial to decompile the app and see what's inside or watch network traffic. Anything that's secret in that app will just not be a secret. So someone was like oh, cool, let's go and collect all of the Twitter API keys and put them on GitHub, and see what happens. So, this was the leak of all of Twitter's secret API keys. Yeah, they're not secret and that should never have happened.

Aaron Parecki: So, what's the end result of this? It basically means that anybody can impersonate the Twitter app. That's going to be, maybe not the end of the world. You're not going to get access to somebody's data, but a developer can create an application that will appear to users as if it were the real Twitter app when it goes through the OAuth process. Probably more importantly, this was around the time that Twitter was starting to lock down their API and impose rate limits for new applications. Which really meant that the end result was a developer could go and use the Twitter API for their own accounts without any rate limits, because they could pretend to be the Twitter app instead of having their own developer credentials that had more strict rate limits.

Aaron Parecki: Okay, so what's the moral of the story here? Don't put secrets in native apps because you can't. They're not secret. I wrote a blog post a couple of months ago about this, and the link is down there, or just Google, API keys aren't safe in mobile apps and you'll find it. That talks basically about, it goes through a couple of ways that you can very easily see how it is not safe to put secrets into mobile apps in case you need to cite this for some coworkers who maybe don't understand it yet.

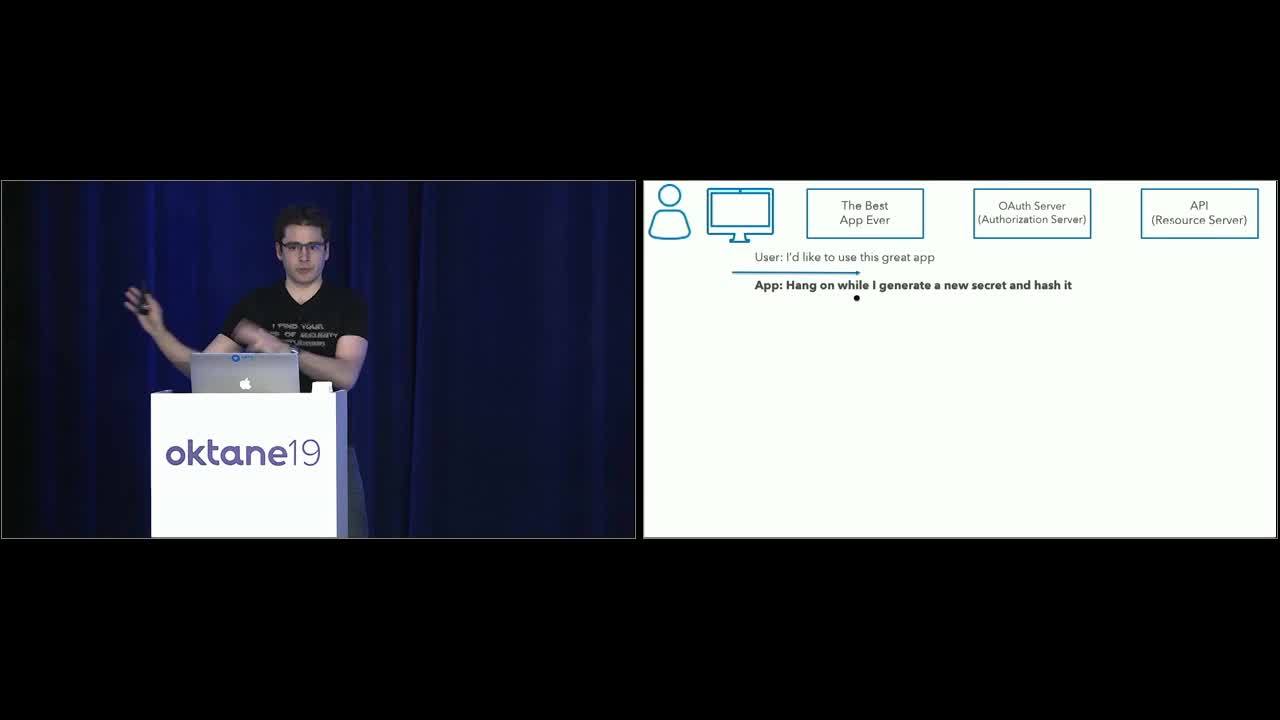

Aaron Parecki: How do we solve this in OAuth? Turns out this is actually one of the reasons that OAuth 1 was scrapped and replaced by OAuth 2. Twitter still uses OAuth 1, they haven't migrated yet. So in OAuth 2 we have a solution for this. Which is, we don't use client secrets for mobile apps. Instead we use PKCE. So, P-K-C-E is pronounced pixie, and stands for Proof Key for Code Exchange. It is an extension to OAuth and basically what it does is it takes the place of the need for a client secret in the OAuth exchange. So, given the authorization code flow that we saw at the beginning, all of that still applies, we just do something before it starts and right at the end. Let's walk through that really quickly.

Aaron Parecki: Here's the flow diagram we saw before. Starts out the same, user says cool, I would like to use this application. Before the app sends the user to the authorization server, it says okay, cool, hang on, I'm going to go make up a new secret. Right now. So, it generates a new secret every time, and then importantly, it hashes it. The trick with the hash is that a hash is a one way operation. So if you think of 10 random numbers and write them down, add them all together. Then give that number to somebody. They won't be able to tell you what 10 numbers you wrote down, right? Because it could be several different versions of 10 numbers. So, it's like that but cryptographically secure. That's not a good hashing function. Don't use it in production.

Aaron Parecki: But the point is, this app will generate a secret, and then hash it, then the hash is what is sent in the URL. So the hash is the thing sent publicly in the browser, the user can see it, but it's fine because nothing can reverse engineer it. Then the user goes over to the OAuth server and says hey I'm trying to log into this app and here's the hashed secret it gave me. Then the OAuth server replies back cool, here's the temporary code, that's the same as before. Nothing changes with that step. The device then goes back to the app and says here's the temporary code, please use this to get a token. And now here's where it's different again.

Aaron Parecki: This time, when the app gets the code, it doesn't have a secret, so anybody who steals the code could normally exchange that for an access token. But because we're doing PKCE, the app has to also include the plain text secret that it used to generate the hash. Remember, this is the first time the secret is sent anywhere, so it didn't have a chance to get intercepted ever. Because it hasn't ever been sent. This is being sent over a secure channel so there's again, no way to intercept it. The server then says cool, let me hash that secret and check if it matches the one I saw at the beginning. If it matches, it knows that that code wasn't stolen. And now everything continues as normal.
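The generate, hash, and compare steps can be sketched as follows. This assumes the `S256` method from the PKCE specification (RFC 7636), where the hash sent in the URL is the Base64url-encoded SHA-256 of the secret with the padding removed. The function names are invented for this example, and in practice a library handles these steps.

```python
import base64
import hashlib
import hmac
import secrets


def make_code_verifier() -> str:
    """App side: make up a fresh random secret for this one login attempt."""
    return secrets.token_urlsafe(32)  # 43 URL-safe characters


def make_code_challenge(verifier: str) -> str:
    """App side: hash the secret; only this hash goes in the front-channel URL."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def server_accepts(stored_challenge: str, presented_verifier: str) -> bool:
    """Server side: hash the plain secret and compare with the earlier hash."""
    recomputed = make_code_challenge(presented_verifier)
    return hmac.compare_digest(stored_challenge, recomputed)


verifier = make_code_verifier()
challenge = make_code_challenge(verifier)
print(server_accepts(challenge, verifier))         # the real app
print(server_accepts(challenge, "stolen-code-only"))  # someone without the secret
```

Someone who intercepts the temporary code has seen only the hash, so they have nothing that hashes to the stored value when they try to redeem the code.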

Aaron Parecki: So, thankfully you don't have to remember this usually because it's all handled by the library that you're using. So, if you're using the Okta SDK, the Okta SDK does this for you. If you're using a server that doesn't have a library of their own, AppAuth is a good library to use as well, and this will handle that hashing stuff for you.

Aaron Parecki: Next example, stolen access tokens from Facebook. This was a recent one. This was in 2018. You probably remember these headlines. It was like 50 million Facebook accounts were exposed or hacked or however they were calling it. Because this is Facebook, and because this was 2018, there was a lot of eyeballs on Facebook and everybody was wondering how this happened. Facebook decided to actually publish a surprising amount of detail about the hack, so we can actually go and look at what happened in detail, and try to understand what was going on. I personally don't think these kinds of headlines are very useful but if you go and actually look under the hood, and find out what actually happened, we can learn something from it.

Aaron Parecki: It's going to take a couple of steps to build up to this, so bear with me here. The problem that happened with Facebook was actually three different bugs stacked together. And each one by itself wasn't that bad, maybe wasn't a deal breaker, wouldn't have led to a 50 million account compromise. But when combined, this is what happened.

Aaron Parecki: So, first bug. As you know, when you go to your own Facebook profile, you can see what your profile looks like to other people. Which is actually a very cool feature and it's like, good on Facebook for doing that, because that's a pretty cool thing that helps people understand better about their own privacy controls that Facebook is giving them, right. So, great feature, but if you were on your profile, you could go and say like view as, your friend or your mom or whatever, and then that was supposed to be a read only view of your profile. There was a bug where they incorrectly put a little box that would let you post a video to your timeline. Because you know when you go to someone's page you can post a video to their timeline on their birthday. There was a bug where this view as feature showed that box. This doesn't sound that bad, right? Like no big deal. It just incorrectly showed an input field. So, hold that one in your mind for a little bit.

Aaron Parecki: Second bug, unrelated. They made a new version of the video uploader, which was the thing that would pop up. And that incorrectly generated an access token that had the permissions of the Facebook mobile app. Normally this video uploader is supposed to have permission to just upload a video, right? So you can imagine, like, whatever you're doing with your access tokens to control the capabilities of that access token. It was supposed to be limited to the ability to post videos. Instead, it had permissions of the Facebook mobile app, which means basically it can do anything. Because the mobile app can do almost everything. Okay, so hold that one in your mind now.

Aaron Parecki: Third, when the video uploader appeared-

Speaker 2: Uh oh.

Aaron Parecki: Uh oh is right. It generated the access token not for you as the viewer, but for the user that you were looking up. So again, by itself, this wouldn't necessarily be a huge deal. Because it was supposed to have generated an access token that could only upload videos. But because of that other bug, it then had the permissions of the Facebook mobile app. So, you stack all these three things together and you end up with this. You can go to your profile, use the view as feature to see what your profile looks like to somebody else. You would end up with an access token that belongs to that user, which has the permissions of the Facebook mobile app. Which is just like holy crap. And that, essentially, is a complete account takeover and you can do anything that that user can do.

Aaron Parecki: That is how that spread. So, how do you fix this? Well, this is tricky because there were three different things that interacted in ways that nobody expected and stacked up to cause this bug. But I think that the core of the problem is that, essentially, Facebook was allowing their own internal components to impersonate other internal components in ways that were not appropriate. So, treating your own components of your applications the same way that you would treat third party applications, I think would help avoid this kind of situation.

Aaron Parecki: Because if you imagine, somebody had to write code that would let this part of their internal app swap out and pretend to be this other part of their app. That just shouldn't have ever been written. That kind of code shouldn't exist in your application. And if you're using OAuth Connect properly and making sure every component is treated separately, you won't get into that situation. And if you are treating all of your internal development teams as third parties, it's even easier to keep that from happening, because everybody ends up being very separate and isolated, and you don't share data and mix it inappropriately.
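One way to picture "every component is treated separately" is a token issuer that caps each client at the scopes it was registered with, whether that client is internal or third party. The sketch below is purely illustrative: the client names and scope strings are invented, and nothing here describes how Facebook's systems actually work.

```python
# Hypothetical registry; client names and scope strings are made up.
REGISTERED_CLIENTS = {
    "video-uploader": {"video:upload"},
    "mobile-app": {"profile:read", "profile:write", "video:upload", "messages:read"},
}


def scopes_to_issue(client_id: str, requested_scopes: set) -> set:
    """Never grant a client more than it was registered for."""
    allowed = REGISTERED_CLIENTS.get(client_id)
    if allowed is None:
        raise PermissionError(f"unknown client: {client_id}")
    # A component cannot borrow another component's permissions,
    # even if both belong to the same company.
    return requested_scopes & allowed


print(scopes_to_issue("video-uploader", {"video:upload", "messages:read"}))
```

With a rule like this, a token created for the uploader could at most upload videos, no matter what it asked for.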

Aaron Parecki: Alright, so example number three. JSON Web Tokens, J-W-T or also pronounced jot, there was a problem with many, many libraries for JSON Web Tokens, and this sort of all blew up in 2015. So, JSON Web Tokens, let's back up a little bit; what is a JSON Web Token? It is an encoding mechanism for signing data, transmitting data from one party to another in a signed way. But it is also often used as a way to encode access tokens, and it is used as an OpenID Connect ID token. This is what a JWT looks like. It looks like nonsense, but it's not. It's also not encrypted, it's just Base64-encoded JSON data. So, if you look closely, you'll find two little dots in there, and if you split on the dots it turns into three parts. And the three parts are a header, a payload, and the signature.

Aaron Parecki: So, the header contains information about the token. The middle part, the payload, contains the data you actually care about, like user ID, the groups they're in, the expiration dates. If it's an access token it'll include like scope information, and then the bottom is a signature. So the way JWTs work is the thing that creates one has some sort of secret, usually a public-private key pair, and it will create the payload that it wants. It'll say alright, I'm going to sign this with this signature algorithm, and then use its private key to create a signature, and put that at the bottom. And then this all gets Base64 encoded.
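To make the three parts concrete, the sketch below builds a small demo token and splits it back apart. It is an assumption-laden illustration: it signs with HS256 (a shared secret) only because that works with the Python standard library, whereas the talk describes the more common public-private key setup, and the payload fields and secret are invented.

```python
import base64
import hashlib
import hmac
import json
from typing import Tuple


def b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(text: str) -> bytes:
    # Restore the padding that JWTs strip off before decoding.
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def make_demo_jwt(payload: dict, secret: bytes) -> str:
    """Create header.payload.signature using HS256 (demo only)."""
    header = {"alg": "HS256", "typ": "JWT"}
    parts = [
        b64url_encode(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, payload)
    ]
    signing_input = ".".join(parts)
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{b64url_encode(signature)}"


def split_jwt(token: str) -> Tuple[dict, dict, bytes]:
    """Decode the parts WITHOUT verifying anything; for inspection only."""
    header_b64, payload_b64, signature_b64 = token.split(".")
    header = json.loads(b64url_decode(header_b64))
    payload = json.loads(b64url_decode(payload_b64))
    return header, payload, b64url_decode(signature_b64)


demo_token = make_demo_jwt({"sub": "user-123", "scope": "contacts.read"}, b"demo-secret")
header, payload, signature = split_jwt(demo_token)
print(demo_token)
print(header)
print(payload)
```

Anyone holding the token can read the header and payload this way, which is why a JWT should be treated as signed, not secret.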

Aaron Parecki: Then, when somebody wants to validate the JWT, it will read the JWT, see which signature method it was signed with, and then go find the public key and check the signature. That's all great. The problem with the libraries was that they were using the header algorithm here to determine how to validate the token. So, if you think through this for a minute, you look at this in your code and say okay, is this valid.

Aaron Parecki: So far it's external input, so you can't trust it initially. So you have to validate it, obviously. The problem is that, if you look at the algorithm to check how to validate it, you're using unvalidated data to check if it's valid. So the attack here is that the attacker could just change the algorithm and tell the library to validate a different way. The subtle attack on this was that if you change RS256, which is an asymmetric signing algorithm, to HS256, which is a symmetric signing algorithm, then an attacker could actually use the public key component as a shared secret, modify data, create a signature, put it back, make a new JWT, and give it to somebody and the library would think it's okay.

Aaron Parecki: The more blatant problem here is that there was also a signing algorithm called 'none' which means there is no signature. I don't know why this was a good idea, at all, but, the attacker in this case could just make up whatever stuff they wanted in the payload, change the algorithm to 'none', give it to some server, the server would be like cool, how am I going to check if this access token is valid, well, the signing algorithm says none, and I don't see a signature, so yeah, checks out.

Aaron Parecki: Yeah, so, I guess the moral of the story is don't let the header determine the way you're going to verify the JWT because that is unverified, untrusted input. And just the same way that you don't trust external input in regular web apps and you have to validate all external input, this is the same thing. This is external input until you validate it.

Aaron Parecki: Thankfully most, or I'm pretty sure all, of the JWT libraries fixed this in 2015, 2016. They all published updates, and the fix is that when you're validating a JWT, you now, in your code, have to tell the library which algorithms you accept. So you can still shoot yourself in the foot if you say that you allow the none algorithm. But if you only ever have one signing algorithm, you just put that in the thing and now you're fine.
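The idea behind that fix can be sketched with a small hand-rolled verifier in which the accepted algorithm is a constant in the verifying code rather than something read from the token. This is a simplified illustration, not a real library's API: it supports only HS256 so that it runs on the standard library, and every name in it is invented for the example. Production code should use a maintained JWT library.

```python
import base64
import hashlib
import hmac
import json

EXPECTED_ALGORITHM = "HS256"  # chosen by the verifier, never taken from the token


class InvalidToken(Exception):
    """Raised when a token fails validation."""


def b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def encode_part(part: dict) -> str:
    return b64url_encode(json.dumps(part, separators=(",", ":")).encode("utf-8"))


def sign_jwt(payload: dict, secret: bytes) -> str:
    """Issuer side: sign with HS256 (demo only)."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = encode_part(header) + "." + encode_part(payload)
    signature = hmac.new(secret, signing_input.encode("utf-8"), hashlib.sha256).digest()
    return signing_input + "." + b64url_encode(signature)


def forge_unsigned_jwt(payload: dict) -> str:
    """Attacker side: claim alg 'none' and leave the signature empty."""
    return encode_part({"alg": "none"}) + "." + encode_part(payload) + "."


def verify_jwt(token: str, secret: bytes) -> dict:
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        header = json.loads(b64url_decode(header_b64))
        signature = b64url_decode(signature_b64)
    except ValueError as exc:
        raise InvalidToken("malformed token") from exc
    # The header is untrusted input: it may only confirm what we already expect.
    if not isinstance(header, dict) or header.get("alg") != EXPECTED_ALGORITHM:
        raise InvalidToken("unexpected signing algorithm")
    signing_input = (header_b64 + "." + payload_b64).encode("utf-8")
    expected = hmac.new(secret, signing_input, hashlib.sha256).digest()
    if not hmac.compare_digest(expected, signature):
        raise InvalidToken("bad signature")
    return json.loads(b64url_decode(payload_b64))


SECRET = b"demo-secret"
good_token = sign_jwt({"sub": "user-123"}, SECRET)
print(verify_jwt(good_token, SECRET))

try:
    verify_jwt(forge_unsigned_jwt({"sub": "admin"}), SECRET)
except InvalidToken as error:
    print("rejected:", error)
```

The forged token is rejected before any signature check runs, because the verifier never asks the token how it wants to be verified.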

Aaron Parecki: Alright, last one. This is a really subtle one. This was a problem with Google's OAuth API. So, this went around in 2017 and this was called a phishing attack, or an OAuth worm, it had a couple of different names in the press. But here's what happened. First of all, anybody see anything wrong with this picture? This is a real browser, we're really on accounts.google.com, there's a real SSL cert, so, we're not looking at a fake website. Looks pretty normal.

Aaron Parecki: What if you click the Google Docs and this pops down? See anything wrong now? What is docscloud.info? Right? That looks a little bit fishy. So what's going on here? In this case, the attacker went to the Google developer website, registered for an account, went in and made a new OAuth application, and called it Google Docs. Uploaded the icon for Google Docs into that application. Google happily was like cool, here's a new app, here's a client ID, and client secret, and issued credentials to that application.

Aaron Parecki: So it is a real OAuth app, it has its own client ID and client secret. Then the attacker can take that client ID and use it to create an OAuth request URL. That's going to be like we saw at the beginning where the app wants the user to sign in, so it makes that request URL, puts its client ID in that, and then when you visit that, you'll see a prompt like this, which is Google Docs would like to read and send and delete and manage your email, and manage your contacts. So these are scopes that this app is requesting, in OAuth terminology. What do these scopes let this app do? Well, as soon as one person clicks this, then Google will issue an access token with these scopes.

Aaron Parecki: So, there's no hack here, this isn't really a hack yet. Then, that access token can be used to go and download that person's address book, and then use the Gmail API to send email from their Gmail account to their friends. What are they going to put in that email? So and so shared a file with you, click here to view it in Google Docs. Now when that person clicks the link, open in Google Docs, they're expecting to see a Google Docs window, so they just click okay. Then the process repeats. This is why it's sort of like a phishing thing. This isn't even a spam email, the one on the bottom is not spam, it's sent by Google to another Gmail account, thanks to an OAuth access token. So, as soon as one person clicks that, sends the email, then the next person is ready to click okay on the screen because they're expecting to see a Google Doc, and then it just spreads, and spreads, and spreads, and spreads.

Aaron Parecki: And it happened so fast, within 40 minutes of it starting, Google tweeted this out, they were like we're investigating this phishing email that appears to be Google Docs, don't click anything right now. There was also a Reddit thread where this got posted and in that thread, I need to find it again, because it was amazing to watch this unfold in real time. You could see Google engineers chiming in on the thread, being like, oh we're looking at this, oh this is kind of strange, what's going on here. You could see the thought process of all the engineers at Google unfold in that thread.

Aaron Parecki: Finally, their fix was just to disable the client ID. Because as soon as the next person clicked that link, then they would see this error. That effectively stopped it from spreading. There was no real attack on the protocol, on OAuth here, so it was also very easy to just shut down all the access tokens because they were all associated with that one application. They're just going to blacklist the app and everything's fine.

Aaron Parecki: But that doesn't really solve the problem. The problem wasn't that someone got an access token. The problem was that users were tricked into allowing this application. And it turns out that is a very, very, very hard problem to solve. Because it is trying to teach users things. And anytime you have people in the mix, things are going to be less secure. So, the real challenge here was that the prompt for permission did not provide enough context to the user to know that something was wrong. And that is the idea of user consent. So, the challenge is designing this consent screen in a way that people can actually understand. You want to inform them what's going on, but you don't want to give them so much information that they just click yes because they didn't read it.

Aaron Parecki: So here's Google's, and this is overall pretty well done, pretty good considering the complexity of Google's API. They have hundreds of APIs and very complicated scopes within those APIs. They do a pretty good job of showing it, but as in this screenshot, how do I know this is the real Slack asking for permission? Anybody could make an app called Slack. So, that's the problem, they haven't actually fixed that yet. But they do a pretty good job of showing the scopes, with progressive disclosure, so if you want more information about what this one means, you can get it. So let's look at some other examples of other APIs.

Aaron Parecki: This is the Wunderlist OAuth consent screen. I like that they have this on the left, this app can do these things, and it can't do these things on the right in red. But I don't think it's actually very well branded. If this suddenly appeared because I clicked something, I wouldn't actually know I was on the Wunderlist website. Here's Flickr. Flickr is one of the older OAuth APIs, they were a part of building the spec. And they again do that, here's what the app can do, here's what it can't do, they explicitly call out deleting photos as a permission that apps have to request, and show the user that they won't be able to delete photos. So those are all good things. I think this is also kind of a dated look for websites now, but it is functional.

Aaron Parecki: Here's Spotify. I don't even know what to look at first on this screen. The permissions it's requesting are actually written in two different font sizes, and I don't know why there's like a privacy policy blurb in the middle there, so pretty much if anybody sees this they're just going to click the green button to make it go away. Here's GitHub. GitHub is another one that has a pretty complicated API, there's a lot of permissions involved in the GitHub API. And they do a pretty good job of explaining it. They show the user account that's logged in, they show the scopes, they show which organizations are about to have their data authorized to be used by the application. And down at the bottom, they actually show you what website the application is running on, so you know where you're going to be sent back to. That is, I think, a really important part of indicating whether an app is legit or not.

Aaron Parecki: Facebook is an interesting one. Again Facebook is a very complicated API with a lot of moving parts and this is a very clear and straightforward screen. The really cool part here is if you click edit the info you provide, you can actually decide that an application should not get certain access that it requested. This mechanism has been built into OAuth from the very beginning. Nothing in OAuth says that just because an application requests some scopes that the server has to allow it. Like, the server is welcome to just issue different scopes at the end of the OAuth flow. And Facebook is one of the few that actually took advantage of that part of the spec.
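
Because the server is free to grant fewer scopes than were requested, a client should check what it actually received. The following is a minimal sketch, assuming the token response follows RFC 6749 (a space-delimited "scope" field that may be omitted when the grant matches the request). The function names and the scope names are hypothetical and are not taken from Facebook's or Fitbit's APIs.

```python
def granted_scopes(requested, token_response):
    """Return the set of scopes the server actually granted.

    Assumes an RFC 6749 style token response: "scope" is a
    space-delimited string, and may be left out entirely when the
    granted scopes are identical to the requested ones.
    """
    scope_value = token_response.get("scope")
    if scope_value is None:
        return set(requested)
    return set(scope_value.split())


def missing_scopes(requested, token_response):
    """Return the requested scopes that the user or server declined."""
    return set(requested) - granted_scopes(requested, token_response)


# Hypothetical example: the user unchecked "contacts" on the consent screen.
response = {"access_token": "example-token", "scope": "profile email"}
declined = missing_scopes(["profile", "email", "contacts"], response)
if declined:
    print("Disable features that need:", sorted(declined))
```

An app written this way can degrade gracefully, for example by hiding the feature that depends on the declined scope, instead of failing later when an API call is rejected.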

Aaron Parecki: Fitbit did as well, in a very literal sense. So they have check boxes where you can just uncheck scopes. I think that's pretty cool as well.

Aaron Parecki: So if you look at all these together and try to find the commonalities that make a good consent screen, I think it boils down to this sort of prototype. You want to make sure you are clearly identifying where the user is, what service this is. Make sure that you identify the third party application, both by name and by URL, and also probably show the developer name. Show which user is logged in, in case you have multiple accounts, and show the scopes that the app is requesting. And then of course, there's always the allow and cancel buttons.

Aaron Parecki: So, hopefully this has been useful and you've learned a little bit of what can go wrong and how to fix it. There's a lot more information about this at oauth.net/security, which links out to those RFCs I was talking about, with a lot more reading you can do. OAuth 2.0 Simplified is the book, and I hope you grabbed a copy if you were down at the developer lounge already. Yeah, this is me. Follow me on Twitter, my website's aaronpk.com, and I will be down at the developer lounge for the rest of the day, so thanks.

Aaron Parecki: We have about five minutes for questions, so we can take a couple. There should be a microphone so if anybody has a question. Also before you leave please do rate this session in the mobile app and I will leave this screen up while we do questions for a couple more development sessions today.

Speaker 2: I have a question. For Okta, too, we have API tokens. There isn't a capability where we could limit which APIs the API tokens can be used for. Is there a plan to build it or make it into Okta in the near term?

Aaron Parecki: Caveat this with I am not fully informed about the roadmap of Okta. I believe there is and I don't know the details. Come down to the developer lounge because I will be able to point you to someone who actually knows the roadmap.

Speaker 2: Okay, thank you.

Speaker 3: With stolen API keys and using PKCE, that didn't seem to solve the problem to me. The problem was really you don't know if a native app is truly the real Twitter app, so, just by hashing a secret I generate doesn't prove that to me, right?

Aaron Parecki: Yes. That is a very subtle point and you are absolutely correct. PKCE solves the stolen auth code problem, it doesn't solve the client authentication problem. There are a couple of things you can do in terms of stronger validation of redirect URLs and using the app claimed URLs instead of custom schemes, to sort of help get closer to stricter authentication for the client.

Aaron Parecki: But ultimately, true client authentication is almost impossible to solve. Luckily, that's also less of a problem than the problem of getting authorization codes stolen during the flow. And PKCE does completely solve that part, which is a bigger attack surface, and is thankfully then solved very cleanly by PKCE.
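
For reference, the "hashing a secret I generate" step from the question can be sketched as follows. This is a minimal illustration of the S256 method from RFC 7636, not any particular SDK's implementation. The function names are hypothetical. It shows why PKCE protects the authorization code (only the party holding the original verifier can redeem it) while saying nothing about which app generated the verifier.

```python
import base64
import hashlib
import hmac
import secrets


def make_code_verifier() -> str:
    """Client side: create a fresh random secret for one authorization request.

    32 random bytes encode to 43 URL-safe characters, the minimum
    verifier length RFC 7636 allows.
    """
    return secrets.token_urlsafe(32)


def make_code_challenge(verifier: str) -> str:
    """Client side: derive the S256 challenge sent in the authorization request.

    challenge = BASE64URL(SHA256(ASCII(verifier))) with padding removed.
    """
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def verifier_matches(verifier: str, stored_challenge: str) -> bool:
    """Server side: at the token endpoint, check the verifier against the
    challenge that was stored when the authorization code was issued."""
    return hmac.compare_digest(make_code_challenge(verifier), stored_challenge)


verifier = make_code_verifier()
challenge = make_code_challenge(verifier)
# An attacker who intercepts the authorization code has seen at most the
# challenge, and cannot produce a verifier that hashes to it.
print(verifier_matches(verifier, challenge))
```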

Speaker 3: Gotcha, thanks.

Speaker 4: You didn't mention app attestation. And I've seen that a few times in the OAuth specs. Can you describe the threat that app attestation tends to mitigate?

Aaron Parecki: No. But come ask me later. There's a lot of experimental work that we consider not quite ready for production yet, and I think that kind of is closer to that side of things. But we can talk about it later.

Speaker 5: Hello. For the JWT header issue, couldn't the issue have been solved by including the header in the signature? While creating the signature? Because you-

Aaron Parecki: Sorry, I didn't quite understand that. Including the header in what?

Speaker 5: Like, when you're creating a signature, instead of just including the claims, if you include the header as well, right? Then if you tamper with the header, then the signature would change, right?

Aaron Parecki: Right, but the problem is you need to take a chunk of data to sign, to check, and that data that you take is untrusted until you check it. So even if the header was part of the signature, anybody could modify that header and modify the signature, to make a new JWT, which would validate it.

Speaker 5: But then you don't have the private key to create the signature, right?

Aaron Parecki: Right, so there's no way to spoof it without the private key. The problem here was that you could change the algorithm, tell it to validate it differently.
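
The point about the algorithm can be sketched in code. The following is a simplified, standard-library-only illustration, assuming an HS256-signed token and a verifier that fixes the algorithm in its own configuration. The function names are hypothetical, and production code should use a maintained JWT library rather than this sketch. The key idea is that the header is attacker-controlled input, so the verifier never lets it choose how the token is validated.

```python
import base64
import hashlib
import hmac
import json

# The verifier decides the algorithm up front; the token does not get a vote.
EXPECTED_ALG = "HS256"


def b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign(claims: dict, key: bytes) -> str:
    """Create an HS256 token. The signature covers header and claims."""
    header = b64url_encode(json.dumps({"alg": EXPECTED_ALG, "typ": "JWT"}).encode())
    payload = b64url_encode(json.dumps(claims).encode())
    signing_input = f"{header}.{payload}"
    sig = hmac.new(key, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{b64url_encode(sig)}"


def verify(token: str, key: bytes) -> dict:
    """Return the claims, or raise ValueError if the token is not acceptable."""
    header_b64, payload_b64, sig_b64 = token.split(".")
    header = json.loads(b64url_decode(header_b64))
    # Reject anything that asks to be validated differently (e.g. "none").
    if not isinstance(header, dict) or header.get("alg") != EXPECTED_ALG:
        raise ValueError("unexpected algorithm")
    expected = hmac.new(
        key, f"{header_b64}.{payload_b64}".encode("ascii"), hashlib.sha256
    ).digest()
    if not hmac.compare_digest(b64url_decode(sig_b64), expected):
        raise ValueError("bad signature")
    return json.loads(b64url_decode(payload_b64))


key = b"demo-secret-for-illustration-only"
print(verify(sign({"sub": "alice"}, key), key))
```

A forged token whose header says "alg": "none" and carries an empty signature is rejected by the algorithm check before any signature comparison happens.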

Speaker 5: Hm, okay.

Aaron Parecki: I think there's one more question.

Aaron Parecki: Nope, great. Okay. Thank you all for coming. Thanks so much. Don't forget to rate this in the app, thanks.

In this talk you'll learn about many common security threats you will encounter when building microservices using OAuth, as well as how to protect yourself against them. We'll talk about a few recent high profile API security breaches and how they relate to OAuth. The talk will cover common implementation patterns for mobile apps, browser based apps and traditional web server apps, and how to secure each. We'll also cover the latest best practices around OAuth security being developed at the IETF OAuth working group.