Oktane20: A Developer’s Guide to Docker

Transcript

Lee Brandt: Hello, and welcome to A Developer's Guide to Docker. My name's Lee Brandt. I'm a developer advocate at Okta. This is meant to be a 101 Docker talk for developers: just what developers need to know to get started with Docker. So if you're already pretty familiar with Docker or, god forbid, you're a DevOps engineer, you're going to want to lean on something sharp in about 10 minutes. Just fair warning.

Lee Brandt: So, to start off with, who am I? Again, my name's Lee Brandt, developer advocate at Okta. I've been a programmer since '97, so 23-ish years. I love craft beer, but I'm a recently converted vodkatarian, just trying to live a little healthier. It's a lifestyle choice. A lot of people know me from previous jobs. Keeper of keys and grounds at Hogwarts: they know me from that job. Star of Finding Bigfoot: I was on three seasons and they never got a picture of me; they know me from that job. Obviously very serious and very professional all the time. So that's me. Let's see what this Docker stuff is.

Lee Brandt: Okay. Back in the day, when you wanted to get your application onto a server, you'd go to the ops folks and say, "Hey, I want to put my application on a server," and they'd say, "That's going to be three weeks." And you think, "Why is that going to be three weeks?" They're like, "Well, I have to create a PO. That's going to take at least a week to get approved. Then I have to order the hardware. That's going to take at least a week to get here. Then, once it gets here, it'll take me about a week to get it racked up, get an operating system on it, get it networked, get it secured, and then put any software you need on it, whether that's Apache or Tomcat or IIS or whatever. Then you can put your application on it." And you're like, "Well, this is not ideal from a developer's perspective."

Lee Brandt: But it's not ideal from a CapEx perspective either, because when the ops folks order a server, do they order the Intel Celeron processor with 128 megs of RAM? No. They buy the NASA server with 24 eight-core processors in it; they could run NASA from this thing. So once you get your application on it, your $10,000 server is sitting at one percent utilization. Once the CFO recovers from their coronary, you have to figure out a better solution, and for the longest time that has been VMs. With VMs, I can put multiple machines on this NASA server and get it up to 70 or 80% utilization. VMs were awesome, and they still are awesome. As a matter of fact, there are whole cloud platforms built around VMs.

Lee Brandt: So how do containers fit into all this? Generally, when I start talking about Docker, one of the first things people say is ... well, actually, the first thing people say is, "I should learn more about Docker." But the second thing people say is, "Aren't containers just lightweight VMs?" If it helps you to think of them that way, that's fine, but there are some major differences, and there's a reason containers are actually preferable to VMs in a lot of situations.

Lee Brandt: Let's take a look at the differences between the two. VMs and containers both start with a host operating system. For VMs, you put your VMs on the host operating system, then you put an OS on each one of those VMs, then any software you need to run your app, whether that's Apache or Tomcat or IIS or whatever, and then you can put your app on it, and you're done. Each one of those OSes requires its own license and all that good stuff, right? Containers start the same way: host operating system, multiple containers on it, then you put your app on it, and you're done. Actually, that's not quite right, because the container actually contains your app, so it's really just: put your container on the host operating system and you're done. How is this possible? It's because containers share the host operating system's resources.

Lee Brandt: Now, so do VMs. But when I set up a VM, I don't know about you, but I get decision anxiety: "How much RAM should I give this thing? How much hard drive space? How many processors?" It can be nerve-wracking, and if I get it wrong, am I going to have to take the whole VM down, taking my app offline, while I give it a little more RAM or a little more disk space? The answer is usually yes. Plus, I don't know how many of you watching are DevOps or ops-type people, but I'm assuming that Wednesday is the loneliest day of the week for ops folks. That's the day when you have to go through each machine at your disposal and make sure any updates that need to be installed are installed, and installed securely. You also have to buy licenses for and maintain each one of these operating systems yourself, just like a regular machine.

Lee Brandt: Containers come with everything they need to run your app inside the container, and they use the host operating system's resources. That makes some things difficult. For example, you can't run a Windows container on a Linux machine, because containers share the host's kernel and a Windows container needs a Windows kernel. (On a Mac or Windows machine, Docker Desktop actually runs Linux containers inside a lightweight Linux VM.) You can run Windows containers on Windows machines, because they can use the Windows operating system's resources directly. This is where containers end up outshining VMs at certain points. It doesn't mean VMs don't still have a place; there are just some places where containers are a much better option.

Lee Brandt: The other thing about VMs versus containers: you've got your VM with your app on it, and nobody's using it. Let's say it's a US-only app, so from about 6:00 in the evening to about 8:00 in the morning, nobody's using it. Well, how many resources is the VM taking up? Whatever you gave it, right? If you gave it 100 gigs of disk space, it's still taking that 100 gigs of disk space. It's still taking the four processors you gave it and the four gigs of RAM you gave it, and none of that can be reclaimed by the host operating system. If another VM running on that host needs those resources, you're just out of luck. A container runs a lot like an application, so when nobody's using your app, it takes almost no resources. It takes as few or as many resources as it needs, but you can cap that and say, "This container can never use more than two processors," or "It can never use more than four gigs of RAM," whatever the case is. That way, a denial of service attack on one container doesn't bring down the whole host operating system and all the containers on it.
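A minimal Python sketch of what capping a container looks like on the command line. The helper name `run_with_limits` and the example values are hypothetical; `--cpus`, `--memory`, and `--detach` are real `docker run` flags. The function only builds the argument list, so you can print it or hand it to `subprocess.run` yourself.

```python
def run_with_limits(image: str, cpus: float, memory: str) -> list:
    """Build a `docker run` argument list that caps one container's CPU and memory."""
    return [
        "docker", "run", "--detach",
        "--cpus", str(cpus),  # at most this many CPUs' worth of processor time
        "--memory", memory,   # hard memory limit, e.g. "4g"
        image,
    ]


if __name__ == "__main__":
    # Hypothetical usage: never more than two processors or four gigs of RAM.
    print(" ".join(run_with_limits("nginx:1.17.9", 2, "4g")))
```

With these caps, a busy container gets throttled on CPU, and one that exceeds its memory limit is killed, instead of starving its neighbors.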

Lee Brandt: But, basically, a container takes as little or as much of the resources as it needs, which means that when nobody's using the app, those CPUs, that RAM, and that disk space are available for other containers on the host operating system to use. That's super helpful when it comes to running multiple containers on one host, because let's face it: have you ever tried running two VMs on your local machine? Super responsive, right? I've run 20 containers on my local machine to test out Swarm and Kubernetes, and it works just fine. My host operating system is still super responsive, because for the most part the containers aren't really doing anything. There's no load on those apps, so they're not taking up very many resources.

Lee Brandt: So how does Docker fit into all this? Well, Docker and CoreOS, many years ago, came along and standardized the container. Now, you might think, "Okay, that's cool, they created a standard for containers." But it was huge for the industry, because with a standardized container, I as a host operating system manufacturer can make sure my operating system has everything it needs to run Docker. It also created a whole ecosystem around containers: Kubernetes, Swarm, Apache Mesos, all these container orchestrators. All of these exist because containers became standardized, and now anybody can support them and write software that helps with containers. So that was huge.

Lee Brandt: Now, I know what you're thinking at this point: "Come on, man, let's just get to the demo. Just show me how all this stuff works." So let's do that now. Okay, before we get to the demo, let's take a look at Docker Hub. It's just hub.docker.com. You don't have to sign up to see any of this; I just want to show it to you. Basically, when you get started with Docker, there are two things you need to be aware of, two terms you need to come to terms with, so to speak: images and containers. Docker Hub is a place to store images. If you've done any object-oriented programming, you know the difference between a class and an object: a class is really a blueprint for making a bunch of objects. You can think of an image as the class you use to stamp out a bunch of containers. Really, it's a container frozen in time that you can hydrate multiple containers from.
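The class-versus-object analogy can be made literal with a toy Python model. This is only an analogy, not how Docker is implemented: `Image`, `Container`, and `run` are hypothetical names, and the default command shown for NGINX is there purely for illustration.

```python
import itertools
from dataclasses import dataclass, field
from typing import Tuple

_next_id = itertools.count(1)


@dataclass(frozen=True)
class Image:
    """Like a class: a read-only blueprint that never changes after it's built."""
    name: str
    tag: str
    default_command: Tuple[str, ...]


@dataclass
class Container:
    """Like an object: one instance stamped out of an Image, with its own state."""
    image: Image
    command: Tuple[str, ...]
    id: int = field(default_factory=lambda: next(_next_id))
    running: bool = True


def run(image: Image, *command: str) -> Container:
    # Mirrors `docker run IMAGE [COMMAND...]`: no command means use the image's default.
    return Container(image=image, command=command or image.default_command)


nginx = Image("nginx", "1.17.9", ("nginx", "-g", "daemon off;"))
shell = run(nginx, "/bin/bash")  # like: docker run -it nginx:1.17.9 /bin/bash
server = run(nginx)              # falls back to the image's default command
```

Both containers point at the same frozen image but have their own IDs and commands, which is exactly the class/object relationship.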

Lee Brandt: So if I wanted to take a look at the NGINX image, for instance, I can come here. Generally, images are prefixed with the publisher's name, but official images like this one have no prefix, and Docker Hub marks this as the official build of NGINX. Docker Hub is the place to store images so that you can share them with other people. For those of you whose company doesn't like to have anything off-premises if they can avoid it, you can run your own private registry internally, and that works just fine. It's just that, by default, Docker assumes you're using Docker Hub to store your images.

Lee Brandt: If I need an image that I don't have on my local machine, I get it with docker pull. So I'd do docker pull nginx, and what's the latest version of this? 1.17.9. Now, I refer to an image by its name and its tag, and if I leave the tag off, Docker assumes I mean latest, which right now happens to be 1.17.9. But don't ever, ever, ever, ever use latest in production. Remember that containers are meant to be destroyed and recreated; that's actually how you update a container, by destroying it and replacing it with a new one. So today's latest might not be the same as yesterday's latest. It might not even be the same as 10 minutes ago's latest. So just don't use latest in production. As a habit, I try not to use latest anywhere. So, nginx:1.17.9. When I do a docker pull, it pulls down three layers and then tags the image. We'll take a look at what that means in just a minute.
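To make the "name plus tag, defaulting to latest" rule concrete, here is a simplified Python sketch. The function names are hypothetical and this is not Docker's actual reference parser: it ignores digests (`name@sha256:...`), but it does avoid mistaking a registry port such as `localhost:5000` for a tag.

```python
def parse_image_ref(ref: str) -> tuple:
    """Split "name[:tag]" into (name, tag), defaulting the tag to "latest".

    Simplified sketch: digests (name@sha256:...) are not handled. A colon only
    counts as the tag separator if it comes after the last "/", so a registry
    port such as "localhost:5000/nginx" is not mistaken for a tag.
    """
    last_slash = ref.rfind("/")
    last_colon = ref.rfind(":")
    if last_colon > last_slash:
        return ref[:last_colon], ref[last_colon + 1:]
    return ref, "latest"


def is_pinned(ref: str) -> bool:
    """True if the reference names an explicit tag other than "latest"."""
    return parse_image_ref(ref)[1] != "latest"
```

A check like `is_pinned` is one way to enforce the "no latest in production" habit: `nginx` and `nginx:latest` fail it, `nginx:1.17.9` passes.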

Lee Brandt: Now, if I want to see the images that I have on my local machine, it's just docker image list. These are the new commands. The older commands are still used quite a bit, and there's lots of content out there, blog posts and things like that, that use the old commands, so I'll try to mention both the old command and the new command so you're not confused when you get out there and start reading blog posts. The old command was docker images; the new command is docker image list. We can see I've got nginx 1.17.9 on my local machine. It's got an image ID, its size is 127 megabytes, and it was created two weeks ago. Okay?

Lee Brandt: So if I want to create a container from this image, I use the docker run command: docker run -it nginx:1.17.9 /bin/bash. I'm giving it two switches, -i and -t; -it is just a way of combining the two, and I could also write them separately as -i -t. The -i says I want it to be interactive, which keeps standard input open so I can interact with the container once it starts, and the -t allocates a pseudo-TTY, so I can interact with it from my shell. I want to base this container on the nginx 1.17.9 image, and the last argument, /bin/bash, is the command that I want running as process ID 1.
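Here is the same command assembled as an argument list, as a hypothetical Python helper. The real points it encodes: options such as `-i`, `-t`, and `--name` must come before the image name, and everything after the image becomes the container's command. The example only prints the command rather than running it.

```python
from typing import List, Optional


def interactive_run(image: str, command: List[str], name: Optional[str] = None) -> List[str]:
    """Build `docker run -i -t [--name NAME] IMAGE COMMAND...` as an argument list."""
    args = ["docker", "run", "-i", "-t"]  # -i keeps STDIN open; -t allocates a pseudo-TTY
    if name is not None:
        args += ["--name", name]  # without this, Docker generates a name for you
    args.append(image)
    args += command  # this becomes the container's main process (PID 1)
    return args


if __name__ == "__main__":
    # Prints: docker run -i -t nginx:1.17.9 /bin/bash
    print(" ".join(interactive_run("nginx:1.17.9", ["/bin/bash"])))
```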

Lee Brandt: Now, if you're not a Linux person: on most Linux machines, the process that runs as PID 1, or process ID 1, is init (on many modern distributions, that's systemd). When you start up a machine, it starts all the stuff that's supposed to start when the machine starts, and when you shut the machine down, it's the last thing to stop, because its whole job is to try to stop everything gracefully as the machine comes down. Containers are meant to be one container, one application; they go together. So, in this case, the thing running as process ID 1 is the Bash shell, which means it's also the thing that keeps the container running. When the Bash shell exits, this container will stop running. We'll see that.

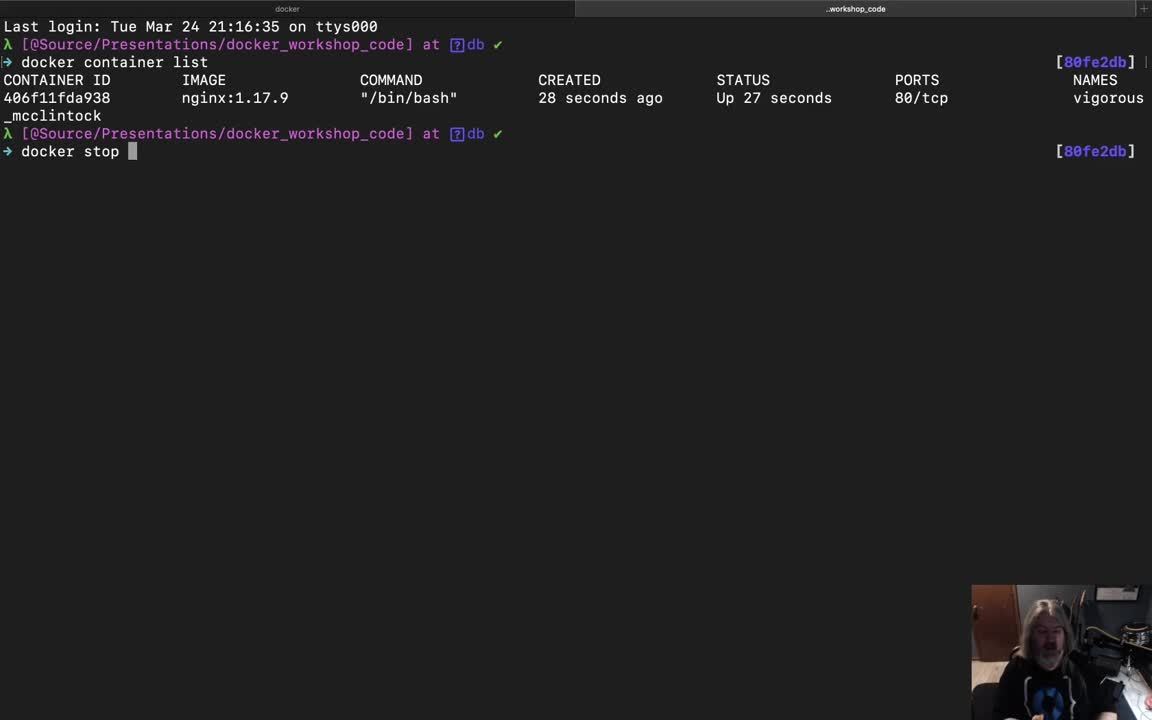

Lee Brandt: I'll go ahead and run it, and we'll see that it came up pretty quickly, and now I'm root@406f11fda blah blah blah, right? Now, let's create another terminal window. If I want to see the list of containers running on my local machine, the new command is docker container list. That shows me the containers that I have running. By the way, the old command is docker ps. Here I have a container ID, and this 406f11f blah blah blah looks remarkably like the one in that prompt, because it is the same thing: that's the container ID. There's the image that it's based on. Here's the command that's running as process ID 1. It was created 28 seconds ago. Its status says it's been up for 27 seconds. Port 80 on TCP is exposed; that's just part of the NGINX image. NGINX is a web server and reverse proxy, so that makes sense.

Lee Brandt: Then the name of it is vigorous_mcclintock. Because I didn't give it a name in my command, Docker made one up for me, and it makes up names by combining an adjective with the last name of a famous scientist or hacker. So I don't know who McClintock is, but I'll have to remember to look that up later. Sometimes, just because I'm a dork, I'll run a container, usually NGINX because it's small, and I'll see, "Oh, arduous_anderson. Okay, let me go look up computer scientists with the last name Anderson." You'll get things like angry_babbage and hungry_bourne. Just know that if you don't give it a name, it's going to pick one for you.
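The naming scheme is easy to mimic. This Python sketch is an illustration only: the word lists are tiny hypothetical samples, not Docker's built-in lists, and the function name is made up. It shows the adjective, underscore, surname pattern.

```python
import random

# Hypothetical, tiny word lists for illustration; Docker's built-in lists are much longer.
ADJECTIVES = ["vigorous", "angry", "hungry", "curious"]
SURNAMES = ["mcclintock", "babbage", "bourne", "hopper"]


def random_container_name(rng: random.Random) -> str:
    """Pick an adjective and a surname and join them with an underscore."""
    return f"{rng.choice(ADJECTIVES)}_{rng.choice(SURNAMES)}"


if __name__ == "__main__":
    rng = random.Random(42)  # seeded so the sketch is repeatable
    print([random_container_name(rng) for _ in range(3)])
```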

Lee Brandt: Now, I've got this thing running, and if I come over here, I can run regular Linux commands, and we'll see that I've got just a basic, regular Linux machine. I can stop it in one of two ways. First, I can come over here, do a docker stop, and refer to the container. I can refer to the container in one of two ways as well. One is by its name, vigorous_mcclintock. That's all right for this one; it's not so bad. But some of those names are pretty hard to spell, so they can be kind of hard to type. So use the container ID instead. The container ID is 406f11 blah blah blah, right? But you only have to use enough of the container ID to make it unique in this list. In this case, I could do docker stop 4, since it's the only one in the list that starts with 4. Out of habit, I just use the first three characters, 406. That's usually enough to make it unique in the list.
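The "just enough of the ID to be unique" rule can be sketched in Python. This is a simplified model with hypothetical function names and made-up 12-character IDs (the length `docker ps` displays): the real CLI also accepts full names and has its own error messages, which this does not reproduce.

```python
from typing import List


def resolve_prefix(prefix: str, container_ids: List[str]) -> str:
    """Return the one container ID that starts with `prefix`, or raise if none or several do."""
    matches = [cid for cid in container_ids if cid.startswith(prefix)]
    if not matches:
        raise LookupError(f"no container matches {prefix!r}")
    if len(matches) > 1:
        raise LookupError(f"{prefix!r} is ambiguous: {len(matches)} containers match")
    return matches[0]


def shortest_unique_prefix(target: str, container_ids: List[str]) -> str:
    """Shortest prefix of `target` that no other ID in the list shares."""
    others = [cid for cid in container_ids if cid != target]
    for n in range(1, len(target) + 1):
        if not any(other.startswith(target[:n]) for other in others):
            return target[:n]
    return target
```

With IDs `406f11fda123`, `4a1b2c3d4e5f`, and `9c7e55aa0011`, the prefix `4` is ambiguous, `40` is the shortest unique prefix for the first one, and the habit of typing three characters (`406`) is comfortably unique.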

Lee Brandt: But we're not going to stop it that way. Remember, /bin/bash is the thing that's keeping this container running. So I can just exit, and when I exit out of that, it stops the container and returns me to my shell. Now, let's close this window. If I come back and run docker container list, we can see I've got no containers running. But the container hasn't gone anywhere. It's still there; it's just not running. If I want to see all the containers on my host machine, whether they're running or not, I just add -a. I'm going to run the old command, docker ps -a; you could also do docker container list -a, which is the new command. And here's my container, 406f11da, nginx 1.17.9. It was created three minutes ago, and it exited with a code of zero 40 seconds ago.

Lee Brandt: A code of zero means it exited normally; it wasn't a crash or anything like that. The exit code is the first place to look when a container crashes: you can look up that code by searching for "docker exit code" plus the number. You can also run docker logs with the container name or ID, and you can see everything that happened. Usually there'll be something in there that indicates what went wrong. If I run that here, docker logs 406, we can see exactly what happened: I did an ls -al, here was the output, then I ran exit, and it exited. Okay?

Lee Brandt: So when I come over here and do my docker ps -a, let's say I messed something up and I want to get rid of this container. To get rid of a container, it needs to be stopped first. Then you just do a docker rm and the same thing, 406 or vigorous_mcclintock. With most Docker commands like this, if everything worked the way it should, the only thing that comes back to you in the terminal is the argument you passed to the command, in this case 406. So now, when I do a docker ps -a, I don't have any containers, running or not. I do still have that NGINX image, though. Let's say I want to get rid of that, because I really want an older version of NGINX, not the newest one. So I do docker rmi, for remove image, and then either nginx:1.17.9 or, just like before, enough of the image ID to be unique, so 667.

Lee Brandt: When I remove it, we'll see that it untagged nginx:1.17.9. It also untagged this ungodly sha256:2539 blah blah blah digest, right? And it deleted four files. All images are made up of layers, and the reason for this is, let's say I'm using a bunch of different versions of NGINX. Well, they probably all start with the same base, so if I have four different versions of the NGINX image on my host machine and they only differ a little bit, my machine only has to store the shared base layers once, plus the diff layers for each version. This saves storage, because I don't have to store the entire image four times. So this is pretty cool, and it's something we'll come back to at the end.
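A back-of-the-envelope Python sketch of why shared layers save space. The digests and sizes are invented for illustration: four NGINX versions sharing one 70 MB base layer, each adding its own 5 MB diff layer. Layers with the same digest are counted once, which is the idea behind Docker's layer sharing, though this is not how Docker's storage drivers are implemented.

```python
from typing import Dict, List, Tuple

Layer = Tuple[str, int]  # (layer digest, size in MB)


def storage_mb(images: Dict[str, List[Layer]]) -> Tuple[int, int]:
    """Return (MB if each image stored every layer itself, MB when shared layers are stored once)."""
    naive = sum(size for layers in images.values() for _, size in layers)
    unique: Dict[str, int] = {}
    for layers in images.values():
        for digest, size in layers:
            unique[digest] = size  # same digest means the same layer, stored once
    return naive, sum(unique.values())


# Hypothetical numbers: four NGINX versions sharing one 70 MB base layer,
# each adding its own 5 MB diff layer.
images = {
    f"nginx:1.17.{patch}": [("sha256:base", 70), (f"sha256:diff{patch}", 5)]
    for patch in range(6, 10)
}
print(storage_mb(images))  # (300, 90)
```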

Lee Brandt: So now you've got the basics of the Docker command line. Generally, I wouldn't start a container with docker run. As a developer, I'm probably going to use a Dockerfile and Docker Compose to do that, so let's take a look at how that works. A Dockerfile has a special filename: it's Dockerfile, capital D, no extension. You can name it something else, but then you'll always have to tell Docker which file to use, because by default it's looking for Dockerfile, capital D, no extension.

Lee Brandt: So this is the format of a Dockerfile. The first instruction has to be the FROM line. This one is based on node 8.4. That's the image Docker will pull from Docker Hub if I don't already have it on my machine, and it's what my new image is based on. You can have comments (and ARG instructions) before the FROM line, but nothing else. If you're really into self-torture and want to build an image without a base image, you could do FROM scratch. But, yeah, don't do that to yourself. There are lots of really great images that already have good security built in, and if you stick to the official ones, you're probably going to be fine. So this is the official node image, version 8.4, and that's important. This is another advantage of containers: I have node 13 installed on my local machine, but this container is going to run node 8.4, because this is an older app.

Lee Brandt: So the first thing I'm going to do is copy everything from my local directory to a directory in the container called /app. Then I set the working directory to /app, which means everything that follows runs in the /app folder in the container. Then I have two commands, written in two different ways just to show you the two styles. The first one is called shell form: it's just RUN followed by the shell command you'd normally run, npm install. Shell form runs through a shell (/bin/sh -c on Linux), as whatever user the image is set to run as, in this case probably root.

Lee Brandt: Whereas this one is known as exec form: it's CMD followed by an array, where the first element is the command you want to run, npm, and the rest of the elements are arguments to it. So this is just going to run npm start, which I could easily have written as CMD npm start, but I wanted to show you both styles. Exec form doesn't run through a shell; the command is executed directly. The other difference between these two lines is the instruction itself: RUN executes while the image is being built, so npm install happens at build time, while CMD sets the default command that runs when a container starts from the image. That's why npm start only runs once the container is up and running.
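Here is a Python sketch of how the two forms translate into the process that actually gets started on Linux. The function name is hypothetical; the rules it models are Docker's documented ones: exec form is parsed as a JSON array of strings and run as-is, and anything else is treated as shell form and wrapped in `/bin/sh -c`.

```python
import json
from typing import List


def to_argv(instruction_args: str) -> List[str]:
    """Return the argv Docker would execute for a RUN/CMD argument string on Linux.

    Exec form (a JSON array of strings) is used as-is; anything else is shell
    form and gets wrapped in /bin/sh -c.
    """
    text = instruction_args.strip()
    if text.startswith("["):
        try:
            parsed = json.loads(text)
        except json.JSONDecodeError:
            parsed = None  # not valid JSON, so it falls through to shell form
        if isinstance(parsed, list) and all(isinstance(a, str) for a in parsed):
            return parsed
    return ["/bin/sh", "-c", text]
```

`to_argv("npm install")` gives `["/bin/sh", "-c", "npm install"]` and `to_argv('["npm", "start"]')` gives `["npm", "start"]`. A common gotcha: exec form needs double quotes, because it's parsed as JSON, so `['npm', 'start']` with single quotes silently becomes a shell command instead.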

Lee Brandt: So this is basically what we're going to do. We get the node 8.4 image from either Docker Hub or an internal registry. Then we copy everything from my local directory to /app and set the working directory to /app, so that npm install and npm start both run in that folder. And npm start is the thing that will be running as PID 1, just like /bin/bash was before.

Lee Brandt: Now, that being said, I don't generally run containers using just a Dockerfile. As a developer, I use Docker Compose, so let's take a look at our Docker Compose file. In this case, I'm going to do a couple of different things. Every Docker Compose file starts with a version on the first line; in this case, 3.3. Then services is the other section. I've got two services here, web and postgres, so I want to start up a web server container and a Postgres container; these are the two containers Docker Compose will start. I want the image to be named workshop/nodeapp. I want the container to be named web, so I don't get hungry_babbage or curious_bourne or whatever we got before. The build context is the local directory, which tells Docker to look in the current directory for a Dockerfile, capital D, no extension, to build my image from, and that's how it knows the image is based on node 8.4, okay?

Lee Brandt: Now, here's the first cool thing. I'm going to marry port 3000 on my local machine to port 3000 in the container. I just happen to know that this node app listens on port 3000 over TCP, because that's where node's going to run. This means I can go to localhost:3000 and it'll actually be served from the container. The environment section sets up some environment variables: the node environment, and the database URL, which is the connection string the app uses to connect to the database. We'll get to that in just a minute.
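The `HOST:CONTAINER` port syntax can be unpacked with a small Python parser. This is a simplified, hypothetical sketch of Compose's short port syntax: it handles `"3000:3000"`, an optional host IP, and an optional `/tcp` or `/udp` suffix, but not port ranges, IPv6 addresses, or the container-port-only form. When no host IP is given, the published port listens on all interfaces.

```python
from typing import Tuple


def parse_port_mapping(spec: str) -> Tuple[str, int, int, str]:
    """Parse a short-syntax mapping such as "3000:3000" or "127.0.0.1:5432:5432/tcp".

    Returns (host_ip, host_port, container_port, protocol). Simplified sketch:
    no port ranges, no IPv6 addresses, and no container-port-only form.
    """
    ports, _, protocol = spec.partition("/")
    parts = ports.split(":")
    if len(parts) == 2:
        host_ip, host_port, container_port = "0.0.0.0", parts[0], parts[1]  # all interfaces
    elif len(parts) == 3:
        host_ip, host_port, container_port = parts
    else:
        raise ValueError(f"unsupported port mapping: {spec!r}")
    return host_ip, int(host_port), int(container_port), protocol or "tcp"
```

The host side always comes first, so `"3000:3000"` means traffic to port 3000 on your machine is forwarded to port 3000 inside the container.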

Lee Brandt: This is the second piece of cool unicorn magic for developers, the first being the ports. I'm going to create a volume: a bind mount from my local directory to /app in the container. It's basically like a symbolic link: anytime anything goes to /app in the container, it's actually going to my local directory. So the app thinks it's running from /app in the container, but it's actually running my code from my local directory, which means I can make changes to my local code and they'll be reflected at localhost:3000 just like normal, except everything is actually running in the container. So it's taking advantage of the fact that it's node 8.4, and I can do node 8.4 type things.
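A Python sketch of how a bind-mount entry like `.:/app` is read. The function and the project directory `/home/lee/workshop` are hypothetical. It relies on one real Compose rule, that relative host paths are resolved against the directory containing the Compose file, and it assumes Unix-style paths. It also simplifies: in Compose, a host part that isn't a path (no leading `.` or `/`) names a Docker-managed volume, which this sketch doesn't distinguish.

```python
import posixpath
from typing import Tuple


def resolve_bind_mount(spec: str, project_dir: str) -> Tuple[str, str]:
    """Split "HOST:CONTAINER[:MODE]" and resolve a relative host path.

    Relative host paths are resolved against the directory holding the
    Compose file (`project_dir`). Assumes Unix-style paths.
    """
    host, sep, rest = spec.partition(":")
    if not sep:
        raise ValueError(f"expected HOST:CONTAINER, got {spec!r}")
    container = rest.split(":")[0]  # drop an optional mode such as ":ro"
    return posixpath.normpath(posixpath.join(project_dir, host)), container
```

So `.:/app` in a Compose file living in `/home/lee/workshop` means "show `/home/lee/workshop` at `/app` inside the container", which is why edits on your machine show up immediately in the running app.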

Lee Brandt: The last thing is this entrypoint. This overrides the CMD in the Dockerfile and says the entrypoint is actually npm run dev. That's going to be the thing running as PID 1, okay? And if we look at the dev script, it runs nodemon. So that's our web server. The other container we're going to start up ... Yes, Docker Compose can start up multiple containers. As a matter of fact, it's set up to run the entire stack. In this case, we've just got a web server and a database that it gets data from. The postgres service is based on the postgres 9.6.3 image. Since I don't have a Dockerfile I'm building this one from, I'm just telling it, "Get the postgres 9.6.3 image," and that's what I'm going to run this container from.
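How an entrypoint override interacts with the image's CMD can be modelled in a few lines of Python. This is a simplified sketch with a hypothetical function name, covering exec-form values only: the image's ENTRYPOINT and CMD are concatenated, a `command` override replaces just the CMD part, and overriding the entrypoint also discards the image's default CMD, which is why `npm run dev` ends up as the whole command here.

```python
from typing import List, Optional


def effective_command(
    image_entrypoint: List[str],
    image_cmd: List[str],
    entrypoint_override: Optional[List[str]] = None,
    command_override: Optional[List[str]] = None,
) -> List[str]:
    """Work out what ends up running as PID 1 (exec-form values only)."""
    if entrypoint_override is not None:
        # Overriding the entrypoint also throws away the image's default CMD.
        return entrypoint_override + (command_override or [])
    cmd = command_override if command_override is not None else image_cmd
    return image_entrypoint + cmd
```

For the web service in this talk, no image entrypoint plus `CMD ["npm", "start"]` plus an entrypoint override of `npm run dev` gives `["npm", "run", "dev"]`.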

Lee Brandt: The container is going to be named db. I'm going to marry port 5432 on my local machine to port 5432 in the container. This way, I can run pgAdmin, connect to localhost, and connect to my KCDC database, where the user is kcdc_app, right? These environment variables are used to set up the Postgres database, and since I've married the container's 5432 to my localhost 5432, I can connect to the KCDC database at localhost:5432 and it will actually be running in this container, just like normal.
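The database URL the web service uses packs the same pieces (user, host, port, database) into one string. Here is a Python sketch using the standard library's `urllib.parse.urlsplit`; the function name and both URLs are hypothetical, since the talk doesn't show the actual value. From pgAdmin on your machine the host is `localhost`, thanks to the port mapping, while a container on the same Compose network would typically use the service name (here `postgres`) as the host instead.

```python
from urllib.parse import urlsplit


def describe_database_url(url: str) -> dict:
    """Break a postgres:// connection URL into the pieces a client connects with."""
    parts = urlsplit(url)
    return {
        "user": parts.username,
        "password": parts.password,  # None when the URL has no password
        "host": parts.hostname,
        "port": parts.port or 5432,  # 5432 is PostgreSQL's default port
        "database": parts.path.lstrip("/"),
    }
```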

Lee Brandt: These last two are specific to Postgres and, well, Docker, really. I'm going to map this ./sql folder as a volume to the /docker-entrypoint-initdb.d directory in the container, which means that when anything looks in docker-entrypoint-initdb.d, it's actually looking in this ./sql folder. Inside the ./sql folder there's just a SQL CREATE TABLE statement, a DDL script. So when the Postgres container first initializes its database (these scripts only run when the data directory starts out empty), it looks in that folder and runs any SQL it finds there. It's going to go ahead and create that speaker table for me. I can also seed the database with test data and things like that, so it's super, super useful.

Lee Brandt: The other thing is probably one of the few times you'd see a volume in production. Volumes are super helpful for development, but there are only a few places where you really need volumes in production. Databases and log files are the two big ones. So for the database, I'm going to create a bind mount from ./db/dev in my local directory, this guy right here, and marry it to /var/lib/postgresql/data. Anybody who's been doing development for a while knows that databases are really just an amalgamation of files. /var/lib/postgresql/data is where the data files for a Postgres database normally live, and this mount puts all of those files in my ./db/dev folder instead. These are all the files that make up a Postgres database. The reason you'd do this in production is, remember, I told you containers are meant to be destroyed and recreated. This means I can destroy and recreate this container, and as long as it has this volume, I don't lose any of my data. You don't want to store the data inside the container, because when you destroy the container, you'd be destroying all your data as well. So this is how you avoid that, and you'd do the same thing with log files.

Lee Brandt: So now we've got our Dockerfile and our Docker Compose dev file, and this is actually how I'd run a container. First, I go to that directory. Okay, I'm in that directory. By default, docker-compose looks for a file called docker-compose.yml. We're using the dev file, so I have to give it the -f switch: docker-compose -f docker-compose.dev.yml up. The real command is just docker-compose up, but I have to give it the file switch because I'm using something other than the standard filename, docker-compose.yml. That one I like to keep for my production Docker Compose, okay?
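The command line for the dev file, built by a hypothetical Python helper. The real detail it captures is ordering: `-f` is an option of `docker-compose` itself, so it goes before the `up` subcommand, while `-d` (run detached, in the background) belongs to `up` and goes after it.

```python
from typing import List, Optional


def compose_up(compose_file: Optional[str] = None, detach: bool = False) -> List[str]:
    """Build a `docker-compose [-f FILE] up [-d]` argument list."""
    args = ["docker-compose"]
    if compose_file is not None:
        args += ["-f", compose_file]  # global option, so it goes before the subcommand
    args.append("up")
    if detach:
        args.append("-d")  # run the containers in the background
    return args
```

`compose_up("docker-compose.dev.yml")` yields `docker-compose -f docker-compose.dev.yml up`; with no file it falls back to the default `docker-compose.yml`.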

Lee Brandt: So I do docker-compose up. It says it's building web, and you'll see these steps look very familiar, almost like they're pulled right from the Dockerfile, because they are. I wish npm and Docker would get together and not make the npm install output red, because I always think something went wrong, but this is just the output of npm install and then npm start. Then here's Docker Compose finishing up: it built web, and then it created the db container. You can see down here, "database system is ready to accept connections," so everything is set up. That means I should be able to go to localhost:3000 and see my application. There's my lovely node application.

Lee Brandt: Now, if you don't believe me that this is my local code running in the container, we can just change this file, nodemon will pick up the change, and when we refresh the page, there it is: Docker for real. That's how I develop during the day. I come in here, make my changes to a file, hit save, come back over here, refresh, and I've got all my changes. Yep, they're good to go. Now, the other thing I wanted to show you is pgAdmin.

Lee Brandt: I can go ahead and connect to this server. The connection goes to localhost:5432. The username is kcdc_app, because that's what we told it in our Docker Compose file, and the database I want is KCDC, which is the only one I'm going to have access to. It doesn't have a password; it's just local. So here's my database, and I'm actually in it. If I come over here and go to /speakers, it's getting data out of my Postgres database. View data, all [inaudible 00:37:46]. There's my Jim Beam and Jack Daniels coming right out of the database onto your screen. Now, once we're done, I can Ctrl+C out of this. I could've added -d to run it detached, so it's not running in the foreground like this. When I hit Ctrl+C, we'll see it's stopping the db container. Oh, it can't stop the database because I'm connected to it, so let's disconnect. Yes, I want to disconnect from that server. Now it can stop the database. Okay. If we go back up here, we can do docker ps -a again, and here are my two containers. This one, the Postgres container, exited with a 137, because I killed it. For npm run dev, Ctrl+C is the normal way to exit, so that one's just 0.
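Exit codes above 128 follow a Unix convention: a process killed by signal N is reported with status 128 + N, so 137 is 128 + 9, SIGKILL. A small Python sketch (hypothetical function name) that decodes them using the standard `signal` module; the signal names assume a Linux or macOS machine.

```python
import signal


def explain_exit_code(code: int) -> str:
    """Translate a container's exit code using the 128 + signal-number convention."""
    if code == 0:
        return "exited normally"
    if code > 128:
        try:
            return f"killed by {signal.Signals(code - 128).name}"
        except ValueError:
            pass  # not a signal this platform knows about
    return f"exited with error code {code}"
```

That gives "killed by SIGKILL" for the Postgres container's 137, and 143 (128 + 15) decodes to SIGTERM, the signal `docker stop` sends first.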

Lee Brandt: Now, I've got these two containers, and let's say I messed something up with the creation of the image or the containers and I want to clean up. This is something I wish somebody had shown me when I first got started with Docker. Remember, even if you're on a Windows machine or a Mac, this is running in a Linux VM: Docker Desktop (or the older Docker Toolbox) runs Docker inside a Linux VM. So I can do docker rm to remove a container, but I can also pass it the output of another command, which is docker ps -a -q. The -a shows all containers, including stopped ones, and -q outputs just the IDs. So if we just look at that, docker ps -a -q prints the IDs of all the containers. So if I want to, I just run docker rm $(docker ps -a -q), the output from docker ps -a -q becomes the input for docker rm, and it removes those two containers. So now I'm all cleaned up.
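The shell one-liner `docker rm $(docker ps -a -q)` feeds one command's output into another. Here is a minimal Python sketch of the same idea using `subprocess`. The helper names `parse_ids` and `rm_all_argv` are hypothetical. Unlike the bare shell form, it skips `docker rm` when there are no containers, because `docker rm` with no arguments is an error. Like the shell form, it will still fail on running containers unless you stop them first.

```python
import subprocess


def parse_ids(ps_output: str) -> list[str]:
    """Split the output of `docker ps -a -q` (one container ID per line) into a list."""
    return [line.strip() for line in ps_output.splitlines() if line.strip()]


def rm_all_argv(ids: list[str]) -> list[str]:
    """Build the argv for `docker rm <id> <id> ...`, or an empty list if there is nothing to remove."""
    return ["docker", "rm", *ids] if ids else []


if __name__ == "__main__":
    # Equivalent of: docker rm $(docker ps -a -q)
    out = subprocess.run(["docker", "ps", "-a", "-q"],
                         capture_output=True, text=True, check=True).stdout
    argv = rm_all_argv(parse_ids(out))
    if argv:
        subprocess.run(argv, check=True)
```

For stopped containers specifically, `docker container prune` does a similar cleanup in a single built-in command.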

Lee Brandt: This is super helpful when you first start messing around with Docker and you create a bunch of containers, some of which don't have names, some of which are messed up, and you just want to wipe the slate, get rid of all the containers on your host machine, and start over. Now, if I do a docker images, I'll see I've got this workshop/nodeapp image, because that's what I told it to call the image it created from that Dockerfile. So if I want to get rid of that, I run docker rmi workshop/nodeapp, and it'll untag workshop/nodeapp:latest and delete the layers in my image that aren't shared with other images. Now, when I come up here and do a docker images, or docker image list, which is the newer command, I've just got the two older images there, and docker ps -a shows no containers at all on my local machine.
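`docker rmi workshop/nodeapp` untags `workshop/nodeapp:latest` because Docker fills in the `latest` tag when a reference has none. The Python function below is a simplified sketch of just that tag-defaulting rule. It is not Docker's full reference parser, which also expands names like `node` to `docker.io/library/node`. The one subtle case is a registry with a port, such as `localhost:5000/nodeapp`. There, the colon belongs to the host, not the tag, so only the last path component is checked.

```python
def with_default_tag(ref: str) -> str:
    """Append ':latest' to an image reference that has no tag or digest (simplified rule)."""
    if "@" in ref:                    # digest references (name@sha256:...) are left alone
        return ref
    last = ref.rsplit("/", 1)[-1]     # only the final path component can carry the tag
    return ref if ":" in last else f"{ref}:latest"


if __name__ == "__main__":
    for ref in ("workshop/nodeapp", "postgres", "localhost:5000/nodeapp", "node:8.4"):
        print(ref, "->", with_default_tag(ref))
```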

Lee Brandt: So that is how you use Docker Compose and Docker as your development environment. Now, think of the implications here: when I start work at a new organization, I don't have to have Node 8.4 installed on my machine. All I need is Docker Desktop. I can go clone the repo that already has the Dockerfile in it, run docker-compose up with the -f switch pointing at the docker-compose.dev.yml file, and I'm up and running. I didn't have to install Node or npm or any of those other things. I just use the node Docker image.
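The onboarding step boils down to a single command. The Python sketch below builds it so the flags are explicit. `-f` selects the compose file and must come before the `up` subcommand. `--build` rebuilds the images from the Dockerfile, and `-d` runs detached, as mentioned earlier in the demo. The file name `docker-compose.dev.yml` comes from the talk. The `compose_up_argv` helper itself is hypothetical.

```python
import subprocess


def compose_up_argv(compose_file: str = "docker-compose.dev.yml",
                    build: bool = True, detach: bool = False) -> list[str]:
    """Build the argv for `docker-compose -f <file> up [--build] [-d]`."""
    argv = ["docker-compose", "-f", compose_file, "up"]   # -f is a global option, so it precedes `up`
    if build:
        argv.append("--build")   # rebuild images from the Dockerfile before starting
    if detach:
        argv.append("-d")        # run in the background instead of streaming logs
    return argv


if __name__ == "__main__":
    subprocess.run(compose_up_argv(), check=True)
```

On newer Docker installations, the same thing is available as `docker compose` (a CLI plugin) instead of the standalone `docker-compose` binary.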

Lee Brandt: The other implication here: if you've ever worked in an organization where you have a UI team and an API team, and the API team is delivering to a test server that all the UI people are hitting, then whenever the API team pushes a broken build to the test server, the UI team is stuck. They're just, "Well, the API's down. I guess I'm going to get bagels." Right? There's nothing really you can do. In this case, the API team could push a new version of the container image up, and I as a developer could do a docker pull, or rebuild the API locally, and just run it locally. Then it doesn't matter that they're pushing broken stuff. If they push a broken image, I just get the one tagged before that, the last known good one, and I'm not stuck; I can continue to work as a UI person.
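Falling back to "the one tagged before that" works because each pushed image keeps its own tag. Here is a small, purely illustrative Python sketch of that choice. The repository name `myteam/api`, the version tags, and the idea of a known-broken set are all hypothetical. The only real command involved is `docker pull <repo>:<tag>`.

```python
from typing import List, Optional, Set


def last_known_good(tags_newest_first: List[str], broken: Set[str]) -> Optional[str]:
    """Return the newest tag not marked broken, or None if every tag is broken."""
    for tag in tags_newest_first:
        if tag not in broken:
            return tag
    return None


def pull_argv(repo: str, tag: str) -> List[str]:
    """Build the argv for `docker pull <repo>:<tag>`, pinning an explicit tag instead of latest."""
    return ["docker", "pull", f"{repo}:{tag}"]


if __name__ == "__main__":
    tags = ["1.4.3", "1.4.2", "1.4.1"]          # hypothetical tags, newest first
    good = last_known_good(tags, broken={"1.4.3"})
    if good is not None:
        print(" ".join(pull_argv("myteam/api", good)))
```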

Lee Brandt: Same thing with the API people. I could actually pull down the container that has the UI in it, run it against my API, and make sure that the data's showing up in the right place, and if it's not, maybe it's because I formatted the data incorrectly or something like that. I can actually have the UI running locally, and the UI folks can have the API running locally, and we can work independently of each other without stepping on each other's toes or keeping each other from being able to work, which is super, super helpful.

Lee Brandt: The last thing I want to mention: we've looked at Dockerfiles. If I go in here, I can actually pull up the Dockerfile that builds the NGINX image. We talked about layers earlier, so I've got this RUN set -x, and addgroup, and all this stuff, but it's all chained together with ampersands (&&). That's because, remember, I had four layers because I had four commands in there. Every RUN instruction creates a new layer. So think about that when you're creating your Dockerfiles. If I'm going to, for instance, install Apache, I'm probably going to install Apache Utils with it, so I would do a single RUN apt-get install of Apache and Apache Utils, right? If you ampersand them together, it's only going to create one layer for that. And you want layers to be semantic: each layer should contain everything that belongs together and is going to be shared from one image to the next.
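To make the layer count concrete, here is a rough Python sketch that counts the instructions that add filesystem layers on top of the base image. In current Docker versions those are RUN, COPY, and ADD; other instructions only change image metadata. It joins backslash-continued lines first, because a RUN whose commands are chained with `&&` across several lines is still one instruction. This is not a real Dockerfile parser, since it ignores heredocs and parser directives. The Debian package names `apache2` and `apache2-utils` in the test are an assumption.

```python
LAYER_INSTRUCTIONS = {"RUN", "COPY", "ADD"}


def count_fs_layers(dockerfile: str) -> int:
    """Count RUN/COPY/ADD instructions after joining backslash-continued lines (rough sketch)."""
    logical_lines = []
    current = ""
    for raw in dockerfile.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):   # skip blank lines and comments
            continue
        if line.endswith("\\"):                # continuation: keep accumulating this instruction
            current += line[:-1] + " "
            continue
        logical_lines.append(current + line)
        current = ""
    if current:
        logical_lines.append(current)
    return sum(1 for l in logical_lines if l.split(None, 1)[0].upper() in LAYER_INSTRUCTIONS)
```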

Lee Brandt: Remember, Docker only stores the deltas, and images share the layers they have in common, right? So if you have a bunch of images that all have Apache and Apache Utils in them, make that a layer: one RUN that does the apt-get install of Apache and Apache Utils together. Make that all one thing, so that Apache is installed, the utilities are installed, the user that's going to run Apache is set up, the security around Apache is set up, and that's all one command. That way, it's all one layer. Then, when you create other images, you can base them off that same thing. They have Apache and Apache Utils already set up, and that layer is shared among the other images, if that makes sense. It's mostly to save space on your host machine.
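Layer sharing works because layers are content-addressed. The OCI image spec gives each layer a DiffID, which is the digest of its contents, and identifies a stack of layers by a ChainID: ChainID(L0) = DiffID(L0), and ChainID(Ln) = SHA256(ChainID(Ln-1) + " " + DiffID(Ln)). Below is a simplified Python model of that idea. Plain strings stand in for the real layer tarballs, and the image names are hypothetical. Two images built on the same base-plus-Apache stack store those layers only once, but an identical top layer placed on a different parent counts as a separate stack.

```python
import hashlib


def digest(data: str) -> str:
    """sha256 digest string in the 'sha256:<hex>' form used by OCI images."""
    return "sha256:" + hashlib.sha256(data.encode()).hexdigest()


def chain_ids(diff_ids: list[str]) -> list[str]:
    """ChainID(L0) = DiffID(L0); ChainID(Ln) = digest(ChainID(Ln-1) + ' ' + DiffID(Ln))."""
    chain: list[str] = []
    for diff_id in diff_ids:
        chain.append(diff_id if not chain else digest(chain[-1] + " " + diff_id))
    return chain


def stored_layer_count(images: dict[str, list[str]]) -> int:
    """Distinct layer stacks to store when identical stacks are shared between images."""
    unique: set[str] = set()
    for layer_contents in images.values():
        unique.update(chain_ids([digest(content) for content in layer_contents]))
    return len(unique)
```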

Lee Brandt: All right. Well, thanks for joining me today for A Developer's Guide to Docker. Make sure you check us out. Check out the blog at developer.okta.com. If you need to get in touch with me, if you have comments or questions about the presentation, I am [email protected]. You can also follow me on Twitter @leebrandt. Thanks for coming today, and enjoy the rest of Oktane.

It works on my machine. We’ve all heard it. Most of us have said it. It’s been impossible to get around it… until now. Not only can Dockerizing your development environment solve that issue, but it can make it drop-dead simple to onboard new developers, keep a team moving forward, and allow everyone on the team to use their preferred tools!

I will show you how to get Docker set up as the run environment for your software projects, how to maintain the Docker environment, and even how easy it is to deploy the whole environment to production, so that you are actually developing in an environment that isn’t just “like” production. It IS the production environment!

You will learn the basics of Docker and how to use it to develop.